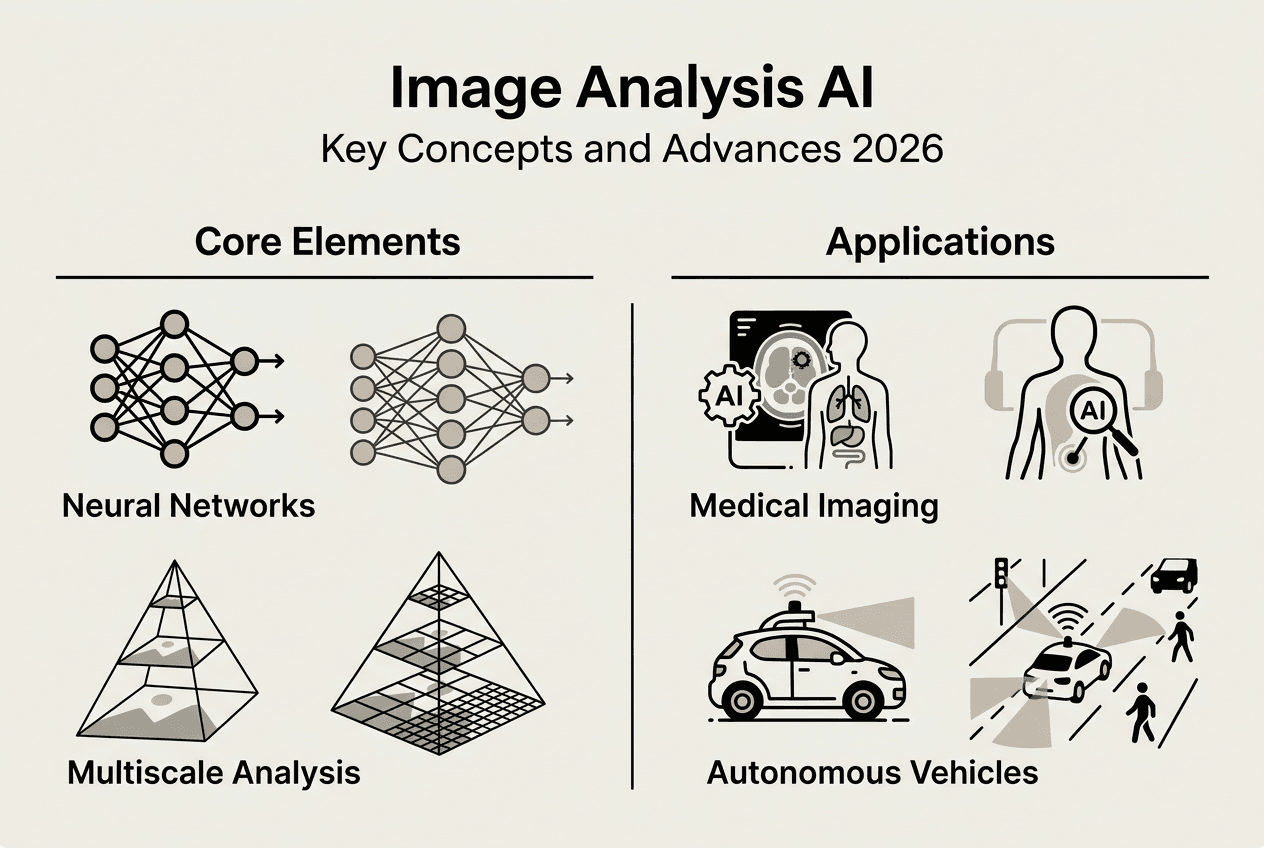

Image analysis AI has evolved far beyond simple pattern recognition, now powering critical decisions in healthcare diagnostics, autonomous vehicles, and industrial quality control. Deep learning breakthroughs enable machines to interpret visual data with unprecedented accuracy, extracting meaningful insights from complex imagery across diverse applications. This guide explores the core technologies driving modern image analysis AI, from neural network architectures to real-world implementation challenges. You will learn how these systems work, where they excel, what limitations they face, and how to apply them effectively in professional contexts.

Table of Contents

- Understanding image analysis AI fundamentals

- Advancements in deep learning for medical image segmentation

- Challenges in image analysis AI: edge cases and functional insufficiencies

- Practical applications and future directions of image analysis AI

Key takeaways

| Point | Details |
|---|---|
| Core technology | Image analysis AI uses deep learning neural networks to automatically extract meaningful information from visual data |
| Medical breakthroughs | Deep learning has revolutionized medical image segmentation, enabling accurate identification of organs, tissues, and pathologies |
| Edge case challenges | Rare, unexpected events pose significant reliability risks in safety-critical applications like autonomous driving |
| Industry adoption | Healthcare, automotive, manufacturing, agriculture, and security sectors lead in deploying image analysis AI solutions |
| Regulatory frameworks | Standards like ISO 21448 address functional insufficiencies and hazard mitigation in AI-driven systems |

Understanding image analysis AI fundamentals

Image analysis AI represents the automated extraction of meaningful information from visual data using algorithms and neural networks. These systems process images through distinct stages: acquisition captures raw visual input, preprocessing enhances quality and normalizes formats, segmentation partitions images into regions of interest, feature extraction identifies relevant patterns, and classification assigns labels or predictions. This pipeline transforms pixels into actionable insights across applications ranging from tumor detection to defect identification in manufacturing.
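The five stages above can be sketched end to end on a synthetic image. This is a minimal illustration only, assuming NumPy is available: the function names, the fixed threshold, and the rule-based classifier are hypothetical stand-ins for the learned components a real system would use.

```python
import numpy as np

def acquire(height=32, width=32, seed=0):
    """Stand-in for acquisition: a noisy synthetic image with one bright square."""
    rng = np.random.default_rng(seed)
    image = rng.normal(loc=50.0, scale=5.0, size=(height, width))
    image[8:16, 8:16] += 150.0  # the "object" of interest
    return image

def preprocess(image):
    """Normalize intensities to the range [0, 1]."""
    lo, hi = image.min(), image.max()
    return (image - lo) / (hi - lo + 1e-8)

def segment(image, threshold=0.5):
    """Partition the image into foreground and background with a fixed threshold."""
    return image > threshold

def extract_features(image, mask):
    """Compute two simple features: foreground area fraction and mean intensity."""
    area_fraction = float(mask.mean())
    mean_intensity = float(image[mask].mean()) if mask.any() else 0.0
    return {"area_fraction": area_fraction, "mean_intensity": mean_intensity}

def classify(features, min_area=0.01):
    """Toy rule-based classifier standing in for a trained model."""
    return "object_present" if features["area_fraction"] >= min_area else "empty"

raw = acquire()
normalized = preprocess(raw)
mask = segment(normalized)
features = extract_features(normalized, mask)
label = classify(features)
print(label, features)
```

In a deep learning system the segmentation, feature extraction, and classification stages are typically learned jointly rather than hand-written, but the data flow between stages stays the same.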

Encoder-decoder neural network architectures form the backbone of modern image analysis systems. The encoder compresses input images into compact representations, capturing essential features while reducing dimensionality. A bottleneck layer holds this condensed information before the decoder reconstructs or interprets it for specific tasks. Skip connections bridge encoder and decoder layers, preserving spatial details that might otherwise be lost during compression. This architecture enables systems to balance global context understanding with fine-grained detail recognition.
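The data flow through an encoder, bottleneck, decoder, and skip connection can be traced with a shape-level sketch. It assumes NumPy, uses random untrained weights, and replaces real convolutions with 1x1 channel mixing, so it shows only how the tensors move and combine, not a trainable model. All names are illustrative.

```python
import numpy as np

def downsample(x):
    """Encoder step: 2x2 average pooling halves height and width. x has shape (C, H, W)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample(x):
    """Decoder step: nearest-neighbour upsampling doubles height and width."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def mix_channels(x, weights):
    """1x1 convolution: a linear mix of channels at every pixel, followed by ReLU."""
    return np.maximum(np.einsum("oc,chw->ohw", weights, x), 0.0)

rng = np.random.default_rng(0)
image = rng.random((1, 16, 16))          # one-channel input image

# Random weights stand in for parameters that training would normally learn.
w_enc1 = rng.normal(size=(4, 1))
w_enc2 = rng.normal(size=(8, 4))
w_dec1 = rng.normal(size=(4, 8 + 4))     # input = upsampled bottleneck + skip features
w_out = rng.normal(size=(1, 4))

enc1 = mix_channels(image, w_enc1)                    # (4, 16, 16) full-resolution features
bottleneck = mix_channels(downsample(enc1), w_enc2)   # (8, 8, 8) compact representation
up = upsample(bottleneck)                             # (8, 16, 16) back to input resolution
merged = np.concatenate([up, enc1], axis=0)           # (12, 16, 16) skip connection
dec1 = mix_channels(merged, w_dec1)                   # (4, 16, 16)
output = np.einsum("oc,chw->ohw", w_out, dec1)        # (1, 16, 16) per-pixel score map

print(bottleneck.shape, merged.shape, output.shape)
```

The concatenation step is where the skip connection does its work: the decoder sees the full-resolution encoder features alongside the upsampled bottleneck, so spatial detail lost during pooling is available again.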

Encoder choice significantly impacts performance on vision-centric tasks such as document understanding, spatial reasoning, and fine-grained recognition. Different encoder designs optimize for specific image characteristics: some excel at capturing texture patterns, others prioritize geometric relationships or color distributions. Task requirements should guide encoder selection, matching architectural strengths to application demands. Medical imaging might prioritize encoders trained on similar anatomical structures, while manufacturing inspection systems benefit from encoders optimized for edge detection and surface analysis.

Multiscale analysis allows networks to process images at various resolutions simultaneously, capturing both broad contextual information and minute details. Attention mechanisms further refine this capability by learning which image regions deserve computational focus, mimicking human visual attention. These components enable networks to handle complex scenes where relevant features appear at different scales or locations. A chest X-ray analysis system might use multiscale processing to detect both large anatomical structures and subtle nodules simultaneously.
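As a rough illustration of both ideas, the sketch below builds an image pyramid by average pooling and computes a softmax attention map over pixel positions. It assumes NumPy, and it uses raw pixel intensity as the attention score; in a real network those scores would be learned, so treat the scoring choice as a placeholder.

```python
import numpy as np

def average_pool(image, factor):
    """Reduce resolution by averaging non-overlapping factor x factor blocks."""
    h, w = image.shape
    h_c, w_c = h - h % factor, w - w % factor   # crop so the blocks divide evenly
    cropped = image[:h_c, :w_c]
    return cropped.reshape(h_c // factor, factor, w_c // factor, factor).mean(axis=(1, 3))

def build_pyramid(image, factors=(1, 2, 4)):
    """Return the same image at several resolutions, keyed by downsampling factor."""
    return {factor: average_pool(image, factor) for factor in factors}

def spatial_attention(score_map, temperature=1.0):
    """Softmax over all pixel positions, so the weights are positive and sum to 1."""
    scores = score_map / temperature
    scores = scores - scores.max()              # subtract the max for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum()

image = np.zeros((16, 16))
image[2:4, 2:4] = 1.0     # small, bright, nodule-like spot
image[8:16, 8:16] = 0.5   # large, dimmer structure

pyramid = build_pyramid(image)
attention = spatial_attention(image, temperature=0.1)

for factor, level in pyramid.items():
    print(factor, level.shape, round(float(level.max()), 3))
print(np.unravel_index(attention.argmax(), attention.shape))
```

At the coarsest level the small spot is averaged away while the large structure survives, which is why a network that looks at several resolutions can pick up both.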

Pro Tip: Match your encoder architecture to your specific application domain by evaluating pretrained models on representative samples from your dataset, prioritizing those demonstrating strong performance on similar visual characteristics and task requirements.

Advancements in deep learning for medical image segmentation

Before the advent of deep learning, segmentation methods evolved along two paradigms: purely image-based approaches and model-based approaches. Image-based methods relied on pixel intensity thresholds, edge detection, and region growing algorithms that struggled with noise and variability. Model-based approaches incorporated prior knowledge about organ shapes and tissue properties but required extensive manual tuning and often failed when anatomical variations exceeded predefined parameters. These traditional techniques demanded significant expert intervention and produced inconsistent results across different imaging conditions.
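Region growing, one of the image-based methods mentioned above, can be sketched in a few lines. This version assumes NumPy, 4-connectivity, and a fixed intensity tolerance around the seed value; the function name and parameters are illustrative rather than taken from any particular toolkit.

```python
from collections import deque

import numpy as np

def region_grow(image, seed, tolerance):
    """Grow a region from `seed`, adding 4-connected neighbours whose
    intensity lies within `tolerance` of the seed intensity."""
    height, width = image.shape
    seed_value = float(image[seed])
    mask = np.zeros((height, width), dtype=bool)
    mask[seed] = True
    queue = deque([seed])
    while queue:
        row, col = queue.popleft()
        for d_row, d_col in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            n_row, n_col = row + d_row, col + d_col
            inside = 0 <= n_row < height and 0 <= n_col < width
            if inside and not mask[n_row, n_col]:
                if abs(float(image[n_row, n_col]) - seed_value) <= tolerance:
                    mask[n_row, n_col] = True
                    queue.append((n_row, n_col))
    return mask

image = np.full((10, 10), 20.0)
image[3:7, 3:7] = 100.0   # bright 4x4 structure
image[0, 0] = 100.0       # same intensity, but not connected to the seed

mask = region_grow(image, seed=(4, 4), tolerance=10.0)
print(int(mask.sum()))    # 16: the connected square only
```

The manual tuning burden is visible even here: the result depends entirely on the chosen seed and tolerance, and noise that pushes pixels past the tolerance will fragment the region.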

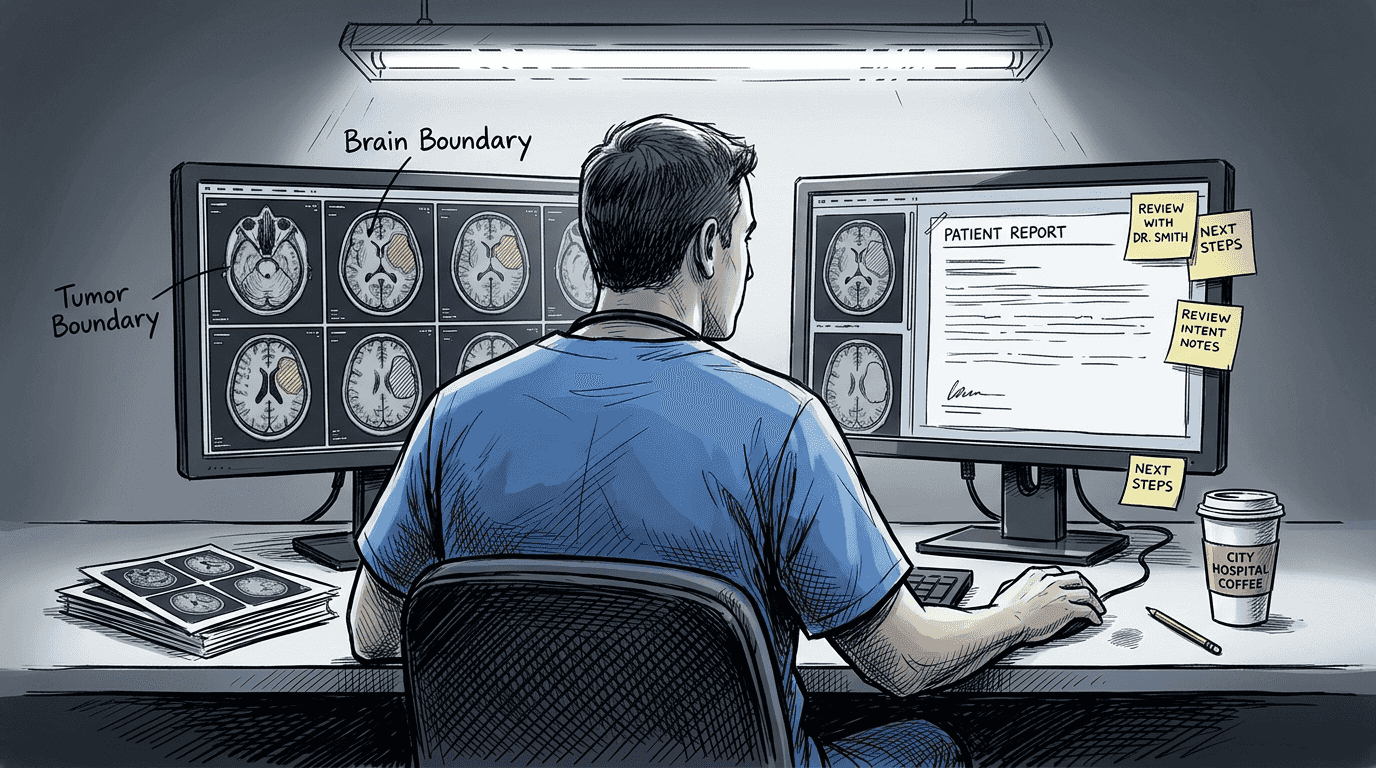

Deep learning architectures transformed this landscape by automatically learning hierarchical feature representations directly from training data. Medical image segmentation has advanced rapidly, driven largely by deep learning, enabling accurate and efficient segmentation of organs, tissues, cells, and pathologies across diverse modalities. Convolutional neural networks eliminated the need for manual feature engineering, discovering optimal patterns through exposure to thousands of annotated examples. This shift dramatically improved accuracy while reducing the time required for segmentation tasks from hours to seconds.
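The text does not say how segmentation accuracy is measured. Overlap metrics such as the Dice coefficient and intersection over union (IoU) are widely used for this purpose, so the sketch below assumes those two. It requires NumPy, and the masks are synthetic.

```python
import numpy as np

def dice_coefficient(pred, target):
    """Dice = 2 * |A and B| / (|A| + |B|) for two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    total = int(pred.sum()) + int(target.sum())
    if total == 0:
        return 1.0  # both masks empty: treated here as perfect agreement
    return 2.0 * int(np.logical_and(pred, target).sum()) / total

def iou(pred, target):
    """Intersection over union for two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    union = int(np.logical_or(pred, target).sum())
    if union == 0:
        return 1.0
    return int(np.logical_and(pred, target).sum()) / union

target = np.zeros((8, 8), dtype=bool)
target[2:6, 2:6] = True        # reference annotation: 16 pixels

pred = np.zeros((8, 8), dtype=bool)
pred[2:6, 3:7] = True          # prediction shifted one column: 12 pixels overlap

print(dice_coefficient(pred, target))  # 0.75
print(iou(pred, target))               # 0.6
```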

Key segmentation targets now span the full spectrum of medical imaging needs:

- Organs including brain structures, liver, kidneys, heart chambers, and lungs across MRI and CT scans

- Tissue types such as gray matter, white matter, tumor margins, and vascular networks

- Cellular structures in microscopy images for pathology and research applications

- Pathological regions identifying lesions, tumors, hemorrhages, and abnormal tissue formations

- Cross-modality applications handling X-ray, ultrasound, PET, and combined imaging protocols

| Aspect | Traditional Methods | Deep Learning Methods |
|---|---|---|
| Feature extraction | Manual design by experts | Automatic learning from data |
| Accuracy | Moderate, inconsistent | High, reproducible |
| Processing time | Hours per scan | Seconds per scan |
| Adaptability | Requires retuning for variations | Generalizes across similar cases |
| Training requirements | Minimal data, extensive rules | Large annotated datasets |

The clinical impact extends beyond speed improvements. Deep learning models detect subtle patterns human observers might miss, supporting earlier disease detection and more precise treatment planning. AI in dental radiology demonstrates how these technologies enhance diagnostic capabilities across specialties. Planmeca Romexis image segmentation exemplifies practical implementations reducing radiation exposure while maintaining diagnostic quality.

Pro Tip: Leverage pretrained medical imaging models and transfer learning to accelerate development timelines, fine-tuning existing networks on your specific anatomical targets rather than training from scratch, which reduces data requirements and improves performance.

Challenges in image analysis AI: edge cases and functional insufficiencies

Edge cases in autonomous driving are rare, unexpected events that disproportionately contribute to failures. These scenarios fall outside normal operating parameters: a pedestrian wearing reflective clothing that confuses sensor systems, unusual weather conditions creating sensor artifacts, or road configurations absent from training data. While individually uncommon, their collective impact on system reliability proves substantial. A single unhandled edge case can trigger catastrophic failures in safety-critical applications.

The long tail problem describes the power law distribution of scenarios, where a few common scenarios account for most driving miles, while many rare scenarios occur infrequently. Training data naturally overrepresents frequent situations, leaving systems vulnerable to rare events. An autonomous vehicle might encounter millions of standard intersections during development but only a handful of construction zones with temporary signage. This imbalance creates blind spots where system behavior becomes unpredictable.
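A small simulation makes the imbalance concrete. The sketch assumes scenario frequencies follow a Zipf-style power law with exponent 1 over 10,000 scenario types; both numbers are illustrative choices, not measurements from any driving dataset.

```python
import numpy as np

def zipf_probabilities(num_scenarios, exponent=1.0):
    """Probability of each scenario type when frequency falls off as 1 / rank**exponent."""
    ranks = np.arange(1, num_scenarios + 1)
    weights = 1.0 / ranks ** exponent
    return weights / weights.sum()

num_scenarios = 10_000
probs = zipf_probabilities(num_scenarios)

# How many of the most common scenario types cover half of all encounters?
cumulative = np.cumsum(probs)
head_size = int(np.searchsorted(cumulative, 0.5)) + 1

# How much probability mass sits beyond the 1,000 most common types?
tail_mass = float(probs[1000:].sum())

# Simulate a training set and count scenario types that never appear in it.
rng = np.random.default_rng(0)
samples = rng.choice(num_scenarios, size=50_000, p=probs)
unseen = num_scenarios - np.unique(samples).size

print(head_size, round(tail_mass, 3), unseen)
```

Under these assumptions, fewer than a hundred scenario types cover half of all encounters, while thousands of types never appear in a 50,000-sample training set even though together they carry a meaningful share of real-world exposure.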

Main challenges posed by edge cases include:

- Detection difficulty as anomalous situations lack clear defining patterns for recognition algorithms

- Data scarcity making it nearly impossible to collect representative training examples for every rare scenario

- Testing limitations where validation processes cannot feasibly cover the infinite variety of real-world edge cases

- Combinatorial explosion as multiple unusual factors interact, creating scenarios exponentially more complex than individual edge cases

- Safety implications where even low-probability failures carry unacceptable consequences in critical applications
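The combinatorial explosion in the list above is easy to demonstrate by enumerating combinations of a few condition categories. The categories and values below are made up for illustration; only the Python standard library is used.

```python
from itertools import product

# Hypothetical condition categories; a real taxonomy would be far larger.
conditions = {
    "weather": ["clear", "rain", "snow", "fog", "glare"],
    "lighting": ["day", "dusk", "night", "tunnel"],
    "road": ["highway", "intersection", "roundabout",
             "construction_zone", "unpaved", "bridge"],
    "actor": ["none", "pedestrian", "cyclist", "animal",
              "emergency_vehicle", "debris", "stalled_car", "road_worker"],
}

# Every combination of one value per category is a distinct scenario.
scenarios = [dict(zip(conditions, combo)) for combo in product(*conditions.values())]
print(len(scenarios))  # 5 * 4 * 6 * 8 = 960

# Adding a single two-valued factor doubles the count.
conditions["sensor_state"] = ["nominal", "partially_occluded"]
extended = list(product(*conditions.values()))
print(len(extended))   # 1920
```

Four small categories already yield 960 scenarios, and each additional factor multiplies the total, which is why exhaustive testing becomes infeasible so quickly.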

ISO 21448 (SOTIF) addresses hazards arising from functional insufficiencies, where a system works as designed but doesn't account for real-world scenarios. This standard provides frameworks for identifying, analyzing, and mitigating risks stemming from specification gaps rather than component failures. It requires systematic evaluation of known and unknown unsafe scenarios, establishing processes to discover edge cases before deployment. Compliance demands rigorous documentation of system limitations and operational boundaries.

Companies implementing robust edge case management follow structured approaches:

- Systematic scenario mining analyzes operational data to identify unusual events and near-miss incidents

- Simulation environments generate synthetic edge cases by combining rare conditions and testing system responses

- Adversarial testing deliberately creates challenging scenarios designed to expose system weaknesses

- Continuous monitoring collects real-world performance data to detect emerging edge cases post-deployment

- Iterative refinement updates models with new edge case examples as they are discovered and validated

- Safety envelope definition establishes clear operational boundaries within which system performance has been validated
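A safety envelope, the last item in the list above, can be expressed as an explicit data structure that is checked at runtime. The fields and limits here are hypothetical examples rather than values from ISO 21448 or any real system; only the standard library is used.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SafetyEnvelope:
    """Hypothetical operational boundaries inside which the system was validated."""
    max_speed_kph: float
    min_visibility_m: float
    allowed_weather: frozenset

    def violations(self, speed_kph, visibility_m, weather):
        """Return a list of reasons the current conditions fall outside the envelope."""
        problems = []
        if speed_kph > self.max_speed_kph:
            problems.append(f"speed {speed_kph} kph exceeds {self.max_speed_kph} kph")
        if visibility_m < self.min_visibility_m:
            problems.append(f"visibility {visibility_m} m below {self.min_visibility_m} m")
        if weather not in self.allowed_weather:
            problems.append(f"weather '{weather}' was not validated")
        return problems

envelope = SafetyEnvelope(
    max_speed_kph=60.0,
    min_visibility_m=100.0,
    allowed_weather=frozenset({"clear", "rain"}),
)

print(envelope.violations(50.0, 200.0, "clear"))  # [] -> inside the envelope
print(envelope.violations(80.0, 50.0, "snow"))    # three violations -> hand over or stop
```

Returning the reasons rather than a bare boolean makes it easier to log why the system left its validated domain, which feeds back into scenario mining.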

Reported figures suggest that edge cases represent less than 1% of total operating scenarios yet account for over 60% of system failures in AI-driven applications. Exact proportions will differ between systems, but the pattern highlights the disproportionate impact of rare events on overall reliability and the importance of comprehensive edge case management.

Drone services safety regulations illustrate how industries beyond automotive address similar challenges, establishing operational constraints that limit exposure to unhandled edge cases while technology matures.

Practical applications and future directions of image analysis AI

Image analysis AI deployment spans industries where visual data drives critical decisions. Healthcare organizations use these systems for diagnostic imaging, pathology slide analysis, and surgical planning. Autonomous vehicle manufacturers rely on real-time image interpretation for navigation and obstacle avoidance. Manufacturing facilities implement quality control systems detecting product defects invisible to human inspectors. Agricultural operations monitor crop health and optimize resource allocation through aerial imagery analysis. Security applications range from facial recognition to anomaly detection in surveillance footage.

Application types demonstrate the technology's versatility:

- Anomaly detection identifies deviations from normal patterns in medical scans, production lines, or infrastructure monitoring

- Quality control automates inspection processes, flagging defects in manufactured components with superhuman consistency

- Diagnostics supports clinical decision-making by highlighting potential pathologies and quantifying disease progression

- Spatial reasoning enables robots and autonomous systems to navigate complex environments and manipulate objects

- Content understanding extracts information from documents, signs, and visual media for indexing and retrieval
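Anomaly detection, the first application type in the list above, can be illustrated with a simple statistical baseline: learn a per-pixel mean and standard deviation from defect-free images, then flag pixels that deviate strongly. This is a sketch assuming NumPy and synthetic data. Production systems typically use learned models, and the threshold of 6 standard deviations is an arbitrary illustrative choice.

```python
import numpy as np

def fit_normal_model(normal_images):
    """Estimate per-pixel mean and standard deviation from defect-free images."""
    stack = np.stack(normal_images)
    return stack.mean(axis=0), stack.std(axis=0) + 1e-6

def anomaly_map(image, mean, std, z_threshold=6.0):
    """Flag pixels whose deviation from the normal model exceeds the threshold."""
    z_scores = np.abs(image - mean) / std
    return z_scores > z_threshold

rng = np.random.default_rng(0)

# Fifty synthetic "good" parts: uniform surface with mild sensor noise.
normal_images = [rng.normal(100.0, 2.0, size=(16, 16)) for _ in range(50)]
mean, std = fit_normal_model(normal_images)

# A new part with a simulated 3x3 defect.
test_image = rng.normal(100.0, 2.0, size=(16, 16))
test_image[5:8, 5:8] = 140.0

flags = anomaly_map(test_image, mean, std)
print(int(flags.sum()), bool(flags[5:8, 5:8].all()))
```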

| Industry | Use Case | Typical AI Techniques |
|---|---|---|
| Healthcare | Tumor segmentation, disease classification | U-Net architectures, ResNet encoders |
| Automotive | Object detection, lane recognition | YOLO, Faster R-CNN, semantic segmentation |
| Manufacturing | Defect detection, assembly verification | Convolutional networks, anomaly detection |
| Agriculture | Crop monitoring, yield prediction | Multispectral analysis, temporal modeling |
| Security | Facial recognition, threat detection | Siamese networks, attention mechanisms |
| Retail | Inventory tracking, customer analytics | Object tracking, pose estimation |

Emerging technologies push capabilities beyond current limitations. Attention mechanisms allow networks to focus computational resources on image regions most relevant to specific tasks, improving efficiency and interpretability. Integration of prior knowledge combines learned patterns with domain expertise, reducing data requirements and improving generalization. Multiscale networks process images at multiple resolutions simultaneously, capturing both fine details and broad context. Surveys of recent progress in medical image segmentation trace the key developments driving these innovations.

Ongoing research frontiers target persistent challenges. Generalization across domains remains difficult, with models trained on one dataset often failing on slightly different inputs. Edge case handling continues improving through adversarial training and synthetic data generation. Explainability research aims to make model decisions transparent and verifiable, critical for regulated industries. Few-shot learning techniques reduce the massive data requirements that currently limit deployment in specialized applications.

Best practices for implementing image analysis AI projects:

- Establish clear success metrics aligned with business objectives before selecting models or architectures

- Curate representative training data covering expected operational variability and edge cases

- Implement rigorous validation protocols testing performance on held-out data and real-world scenarios

- Document model limitations and operational boundaries to prevent deployment beyond validated capabilities

- Design human-in-the-loop workflows for high-stakes decisions where AI provides recommendations rather than autonomous control

- Plan infrastructure for model versioning, monitoring, and updates as data distributions evolve

- Conduct regular audits evaluating fairness, bias, and performance across demographic groups and use cases
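The human-in-the-loop practice in the list above often comes down to a routing rule. The sketch below is one possible version: every high-stakes case goes to a human with the model output attached as a recommendation, and low-stakes cases go to a human only when confidence is low. The threshold and field names are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    label: str         # model's recommended label
    confidence: float  # model's confidence in that label, between 0 and 1
    route: str         # "auto" or "human_review"

def route_prediction(label, confidence, high_stakes, review_threshold=0.9):
    """Route a prediction: high-stakes or low-confidence cases go to a human reviewer."""
    needs_review = high_stakes or confidence < review_threshold
    return Decision(label, confidence, "human_review" if needs_review else "auto")

# A confident result on a routine inspection can be accepted automatically.
print(route_prediction("no_defect", 0.97, high_stakes=False).route)   # auto

# The same confidence on a diagnostic case still goes to a clinician.
print(route_prediction("malignant", 0.97, high_stakes=True).route)    # human_review

# Low confidence always triggers review.
print(route_prediction("no_defect", 0.62, high_stakes=False).route)   # human_review
```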

Pro Tip: Prioritize continuous model evaluation and adaptation as operational data accumulates, treating deployment as the beginning of an iterative improvement cycle rather than a final product, with automated monitoring detecting performance degradation and triggering retraining workflows.
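One simple way to implement the monitoring described in this tip is a rolling accuracy window over predictions whose ground truth later becomes available. The class below is a hypothetical sketch using only the standard library. The window size and accuracy floor are placeholder values, and the "retraining workflow" is reduced to a boolean flag.

```python
from collections import deque

class PerformanceMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, window_size=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window_size)  # True where the prediction was correct
        self.min_accuracy = min_accuracy

    def record(self, prediction, ground_truth):
        """Store whether a prediction matched its later-confirmed label."""
        self.outcomes.append(prediction == ground_truth)

    def accuracy(self):
        """Rolling accuracy, or None if nothing has been recorded yet."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        """Signal retraining only once the window is full, to avoid noisy early alarms."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.min_accuracy

monitor = PerformanceMonitor(window_size=10, min_accuracy=0.8)

for _ in range(10):
    monitor.record("defect", "defect")        # model agrees with inspectors
stable = monitor.needs_retraining()

for _ in range(3):
    monitor.record("defect", "no_defect")     # performance starts to degrade
degraded = monitor.needs_retraining()

print(stable, degraded, monitor.accuracy())
```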

Explore AI-powered solutions with Sofia🤖

Implementing image analysis AI projects requires tools that streamline complex workflows from data preparation through deployment. The Sofia AI personal assistant platform offers professionals access to over 60 state-of-the-art AI models, including advanced vision capabilities for document analysis and image interpretation. The platform integrates these technologies into a unified interface, enabling researchers and developers to experiment with different approaches without managing multiple vendor relationships.

Sofia🤖 supports team collaboration on AI projects with enterprise-grade security, GDPR compliance, and custom AI profiles tailored to specific use cases. Real-time streaming responses and document analysis features accelerate development cycles, while flexible pricing accommodates everyone from individual researchers to large enterprise deployments. Whether you're prototyping medical imaging algorithms or deploying production quality control systems, Sofia🤖 provides the infrastructure to transform image analysis concepts into operational solutions.

FAQ

What is the role of deep learning in image analysis AI?

Deep learning enables complex feature extraction and accurate segmentation, surpassing traditional methods in many tasks. It forms the core of most modern image analysis AI systems by automatically learning hierarchical representations from training data. Neural networks discover optimal patterns without manual feature engineering, dramatically improving accuracy while reducing processing time from hours to seconds.

How do image analysis AI systems handle rare or unexpected events?

Rare events, called edge cases, can cause system failures if unhandled. Standards like ISO 21448 (SOTIF) help guide safety and mitigation strategies for these scenarios by establishing frameworks for identifying functional insufficiencies. Companies implement systematic scenario mining, adversarial testing, and continuous monitoring to discover and address edge cases before they cause operational failures.

What industries benefit most from image analysis AI?

Healthcare, automotive, manufacturing, agriculture, and security lead in applying image analysis AI. Applications range from diagnostics and quality inspection to autonomous navigation and monitoring. Each industry leverages the technology differently: healthcare focuses on medical imaging interpretation, manufacturing emphasizes defect detection, while agriculture uses aerial imagery for crop monitoring and resource optimization.

What are key considerations when implementing image analysis AI projects?

Focus on data quality and representativeness to handle operational variability. Choose appropriate models and validate on edge cases to ensure robust performance across real-world scenarios. Plan for ongoing model updates as environments change, treating deployment as an iterative improvement cycle. Establish clear success metrics, document limitations, and implement human-in-the-loop workflows for high-stakes decisions where autonomous control carries unacceptable risks.