Implementing speech recognition in production AI systems presents a unique challenge: balancing accuracy, latency, and compute resources while meeting user expectations for real-time interaction. Recent advances in model architectures have introduced new options for streaming and batch processing, giving developers more flexibility than ever. This guide walks you through the key concepts, preparation steps, model selection criteria, implementation workflows, and evaluation strategies you need to deploy robust ASR systems in 2026.

Table of Contents

- Understanding Speech Recognition Technologies: Core Concepts And Tasks

- Preparing For ASR Implementation: Tools, Data, And Architecture Choices

- Step-By-Step Guide To Implementing And Integrating Speech Recognition Systems

- Evaluating Speech Recognition Performance: Benchmarks, Metrics, And Troubleshooting

- Explore Sofia🤖: Your AI-Powered Personal Assistant

Key takeaways

| Point | Details |
|---|---|
| Speech recognition systems vary widely | Architecture and application needs determine whether you choose streaming or offline models. |
| Model selection balances accuracy and speed | Choosing the right model requires understanding trade-offs between compute cost and performance. |
| Benchmarking ensures production readiness | Evaluation with diverse datasets and metrics validates system reliability before deployment. |
| Streaming and offline ASR have distinct strengths | Real-time applications demand different architectures than batch transcription workflows. |

Understanding speech recognition technologies: core concepts and tasks

Automatic Speech Recognition (ASR) maps a speech waveform to a string of words, converting acoustic signals into text that applications can process. This foundational capability powers virtual assistants, live captions, voice commands, and conversational AI interfaces. Understanding the distinctions between ASR and related tasks helps you scope your implementation correctly.

It's crucial to distinguish ASR from related tasks like voice activity detection and keyword spotting. Voice activity detection identifies when speech is present in an audio stream, while keyword spotting listens for specific trigger words. Full ASR transcribes open-ended speech into complete sentences. Each task requires different model architectures and training approaches.
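
To make the distinction concrete, here is a minimal sketch of the simplest form of voice activity detection: thresholding frame energy. The function names, the 160-sample frame length (10 ms at an assumed 16 kHz sample rate), and the 0.05 threshold are illustrative assumptions, not values from any particular library. Production systems generally use trained VAD models rather than a fixed energy threshold.

```python
import math
from typing import List


def frame_rms(samples: List[float], frame_len: int) -> List[float]:
    """Root-mean-square energy of consecutive, non-overlapping frames."""
    energies = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return energies


def detect_speech(samples: List[float], frame_len: int = 160,
                  threshold: float = 0.05) -> List[bool]:
    """One flag per frame: True where RMS energy exceeds the (assumed) threshold."""
    return [energy > threshold for energy in frame_rms(samples, frame_len)]
```

A detector like this only answers "is someone speaking?" and produces no text, which is why it can run on far smaller models than full ASR.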

ASR tasks vary based on vocabulary size, with smaller vocabularies like digit recognition being easier than open-ended conversational transcription. A system recognizing ten digits faces far less complexity than one handling unrestricted natural language. Your vocabulary scope directly impacts model selection, training data requirements, and achievable accuracy.

Modern ASR systems rely on several model families. Encoder-decoder architectures process audio through an encoder that extracts features, then a decoder that generates text. Self-supervised models like Wav2Vec2 learn representations from unlabeled audio before fine-tuning on transcribed data. Transformer-based models have largely replaced older recurrent networks, offering better parallelization and accuracy. Each architecture brings different strengths to production environments.

Common ASR tasks include:

- Full sentence transcription for meetings, interviews, and content creation

- Real-time captioning for accessibility and live broadcasts

- Voice commands for smart devices and automotive interfaces

- Call center analytics and quality monitoring

- Medical dictation and clinical documentation

Preparing for ASR implementation: tools, data, and architecture choices

Successful ASR deployment starts with selecting the right development tools and frameworks. In 2026, popular options include Hugging Face Transformers for model access, PyTorch and TensorFlow for custom training, and specialized libraries like ESPnet and NeMo for speech-specific workflows. Cloud platforms offer managed ASR services, while edge deployment requires optimized inference engines like ONNX Runtime or TensorFlow Lite.

Modern ASR systems rely on self-supervised pretraining and language model fusion to balance accuracy, latency, and compute costs. Self-supervised learning allows models to learn from vast amounts of unlabeled audio, reducing dependence on expensive human transcription. Language model fusion during decoding improves accuracy by incorporating linguistic context and domain-specific vocabulary.

Architecture choice fundamentally shapes system behavior. RNN-Transducer (RNN-T) models address the streaming limitations of attention-based models by emitting output incrementally as audio arrives. Offline architectures like attention-based encoder-decoders process complete utterances, achieving higher accuracy at the cost of latency. Your application requirements determine which approach fits best.

Hardware considerations span from edge devices to cloud infrastructure. Edge deployment on mobile or embedded processors demands model compression techniques like quantization and pruning. Cloud deployments can leverage GPU acceleration for batch processing or real-time streaming at scale. Consider your latency budget, cost constraints, and privacy requirements when choosing deployment targets.

| Architecture | Latency | Complexity | Best Use Cases |
|---|---|---|---|
| RNN-T/Transducer | Low (streaming) | Medium | Live captions, voice assistants, real-time commands |
| Attention Encoder-Decoder | Medium-High (offline) | High | Batch transcription, high-accuracy documentation |
| CTC-based | Low-Medium | Low-Medium | Resource-constrained edge devices, keyword spotting |
| Hybrid (streaming + LM) | Medium | High | Production systems balancing speed and accuracy |
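
Part of the reason CTC-based models rank low on complexity is that their simplest decoder fits in a few lines. The sketch below shows greedy CTC decoding: take the best symbol per frame, collapse repeated symbols, then drop blanks. The blank index of 0 and the toy vocabulary in the usage note are assumptions for illustration. Real models define their own vocabulary and blank position.

```python
from itertools import groupby
from typing import Sequence

BLANK = 0  # assumed index of the CTC blank symbol


def ctc_greedy_decode(frame_scores: Sequence[Sequence[float]],
                      vocab: Sequence[str]) -> str:
    """Greedy CTC decoding over per-frame scores (one row per audio frame)."""
    # Step 1: pick the highest-scoring symbol index in every frame.
    best = [max(range(len(frame)), key=frame.__getitem__) for frame in frame_scores]
    # Step 2: collapse runs of the same index into a single occurrence.
    collapsed = [index for index, _ in groupby(best)]
    # Step 3: remove blanks, which only separate genuine repeats.
    return "".join(vocab[index] for index in collapsed if index != BLANK)
```

With the vocabulary `["_", "a", "b"]`, per-frame winners `a a _ a b b _` decode to `aab`: the blank between the two runs of `a` is what keeps the repeated letter from being merged.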

Prerequisites for implementation include:

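- Training data: transcribed audio for supervised fine-tuning, ranging from tens of hours when adapting a pretrained model to thousands of hours when the domain is far from the pretraining data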

- Compute resources: GPUs for training, CPUs or specialized accelerators for inference

- Audio preprocessing pipeline: resampling, normalization, feature extraction

- Evaluation datasets: diverse test sets covering accents, noise conditions, domains
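
The preprocessing prerequisite can be sketched in plain Python as follows. This is a minimal illustration, assuming mono audio held as a list of floats. The function names and the 0.95 target peak are hypothetical choices, and the linear-interpolation resampler skips the anti-aliasing filter that a production resampler (for example in torchaudio or librosa) would apply.

```python
from typing import List


def peak_normalize(samples: List[float], target_peak: float = 0.95) -> List[float]:
    """Scale the signal so its largest absolute sample equals target_peak."""
    peak = max((abs(x) for x in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # all-zero or empty input: nothing to scale
    scale = target_peak / peak
    return [x * scale for x in samples]


def resample_linear(samples: List[float], src_rate: int, dst_rate: int) -> List[float]:
    """Resample by linear interpolation (illustrative only, no anti-alias filter)."""
    if not samples:
        return []
    n_out = int(len(samples) * dst_rate / src_rate)
    out = []
    for i in range(n_out):
        pos = i * src_rate / dst_rate          # fractional position in the source
        left = int(pos)
        right = min(left + 1, len(samples) - 1)
        frac = pos - left
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out
```

Most pretrained ASR checkpoints expect a fixed sample rate, so resampling usually comes first, followed by normalization and then model-specific feature extraction.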

Pro Tip: Choose hardware that balances cost and performance for your targeted ASR workload. A single high-end GPU can handle dozens of concurrent streaming sessions, while edge deployment may require model distillation to fit within mobile processor constraints.

Step-by-step guide to implementing and integrating speech recognition systems

Deploying ASR in production follows a structured workflow from model selection through runtime optimization. This systematic approach ensures you address accuracy, latency, and integration requirements before going live.

- Select a base model aligned with your task. For English-only applications, Whisper-derived encoders fine-tuned for English improve accuracy but may reduce multilingual coverage. Multilingual systems should start with models pretrained on diverse languages. Evaluate model size against your compute budget.

- Set up your development environment with necessary frameworks and dependencies. Install PyTorch or TensorFlow, audio processing libraries like librosa or torchaudio, and evaluation tools. Configure GPU drivers if using accelerated training. Establish version control and experiment tracking from the start.

- Prepare your training data by collecting domain-specific audio and transcriptions. Clean transcripts to remove formatting inconsistencies, normalize punctuation, and handle special tokens. Split data into training, validation, and test sets, ensuring no speaker appears in more than one set so that evaluation results are not inflated by speaker leakage.

- Fine-tune the acoustic model on your domain data. Start with a pretrained checkpoint to leverage transfer learning. Adjust learning rate, batch size, and training duration based on dataset size. Monitor validation metrics to detect overfitting early. Use data augmentation like speed perturbation and noise injection to improve robustness.

- Choose a decoding strategy that fits your latency and accuracy requirements. Greedy decoding offers the fastest inference but lower accuracy. Beam search explores multiple hypotheses, improving quality at higher compute cost. Language model fusion during decoding can boost accuracy by 10-20% for domain-specific vocabulary.

- Integrate the model into your application using appropriate APIs. For streaming applications, implement chunked audio processing with overlap to avoid cutting words at boundaries. Handle silence detection and endpoint detection to segment continuous audio into utterances. Build retry logic for network failures in cloud-based deployments.

- Optimize runtime performance through quantization, pruning, or knowledge distillation. INT8 quantization can reduce model size by about 75% relative to 32-bit floating-point weights, often with minimal accuracy loss. Profile your inference pipeline to identify bottlenecks in audio preprocessing, model execution, or post-processing.

- Deploy with monitoring and logging to track real-world performance. Collect metrics on latency, throughput, and error rates. Sample predictions for quality review. Implement A/B testing to compare model versions before full rollout.
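
The chunked audio processing with overlap mentioned in the integration step can be sketched as a small generator. The function name and parameters are hypothetical. Merging the transcripts of overlapping chunks, which a real streaming pipeline also needs, is deliberately left out.

```python
from typing import Iterator, List, Tuple


def chunk_with_overlap(samples: List[float], chunk_len: int,
                       overlap: int) -> Iterator[Tuple[int, List[float]]]:
    """Yield (start_index, chunk) pairs where neighbours share `overlap` samples."""
    if not 0 <= overlap < chunk_len:
        raise ValueError("overlap must be >= 0 and smaller than chunk_len")
    step = chunk_len - overlap
    start = 0
    while start < len(samples):
        yield start, samples[start:start + chunk_len]
        if start + chunk_len >= len(samples):
            break  # this chunk already reached the end of the audio
        start += step
```

At an assumed 16 kHz sample rate, `chunk_len=16000` and `overlap=3200` would give one-second chunks that share 200 ms with their neighbours, so a word cut at one boundary appears whole in the adjacent chunk. The returned start index lets you map chunk-level timestamps back to the full stream.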

Pro Tip: Tune decoding parameters to balance speed and accuracy for production. Start with a beam width of 5 and a language model weight of 0.5, then adjust based on profiling results. Smaller beam widths reduce latency but may miss the best transcription.
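
To show how those two parameters interact, the sketch below applies shallow-fusion scoring to a list of candidate transcriptions: each hypothesis keeps its acoustic log-probability and adds the language model log-probability scaled by the weight. This is n-best rescoring rather than fusion inside the beam search itself, and the dictionary standing in for a language model, along with all scores in the usage note, is a made-up assumption for illustration.

```python
from typing import Dict, List, Tuple


def rescore(hypotheses: List[Tuple[str, float]],
            lm_logprob: Dict[str, float],
            lm_weight: float = 0.5,
            beam_width: int = 5) -> Tuple[str, float]:
    """Return the best (text, fused_score) after shallow-fusion rescoring.

    hypotheses: (text, acoustic_log_prob) pairs from the ASR model.
    lm_logprob: stand-in for a language model, mapping text to log-probability.
    """
    # Keep only the top `beam_width` candidates by acoustic score.
    pruned = sorted(hypotheses, key=lambda h: h[1], reverse=True)[:beam_width]
    # Fused score = acoustic log-prob + lm_weight * language model log-prob.
    fused = [(text, am + lm_weight * lm_logprob[text]) for text, am in pruned]
    return max(fused, key=lambda h: h[1])
```

For example, with acoustic scores of -3.5 for "wreck a nice beach" and -4.0 for "recognize speech", and language model scores of -6.0 and -2.0 respectively, a weight of 0.5 flips the ranking in favour of "recognize speech" (fused -5.0 versus -6.5). With a weight of 0 the acoustically preferred hypothesis wins, which is the behaviour you are trading against when you tune the weight.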

Evaluating speech recognition performance: benchmarks, metrics, and troubleshooting

Rigorous evaluation separates research prototypes from production-ready systems. Multiple metrics and diverse test sets reveal how models perform across real-world conditions.

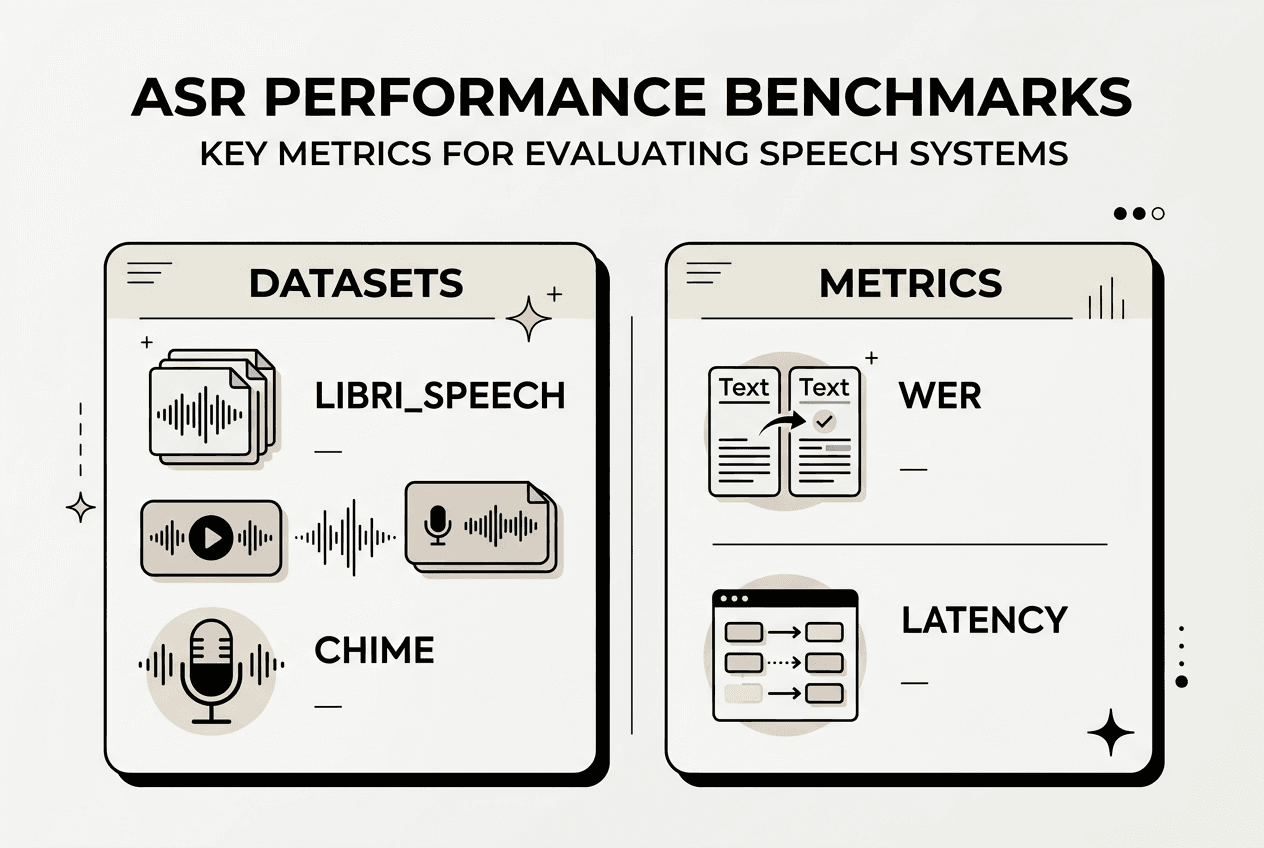

The Open ASR Leaderboard provides standardized benchmarks with multiple datasets and metrics, highlighting that no single dataset or metric suffices for evaluation. Word error rate (WER) is the number of word substitutions, deletions, and insertions divided by the number of words in the reference transcript, while character error rate (CER) applies the same calculation to characters and captures finer-grained mistakes. Inverse real-time factor (RTFx) quantifies processing speed as audio duration divided by processing time, so higher is faster: an RTFx of 2 means the system processes audio twice as fast as real time.
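
WER and CER are both edit-distance ratios, which the following minimal sketch computes from scratch. It assumes whitespace tokenization and no text normalization (casing, punctuation), and it counts spaces as characters for CER. Evaluation toolkits typically normalize text first, so numbers from this sketch are not directly comparable to leaderboard figures.

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Minimum substitutions, deletions, and insertions turning ref into hyp."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance over reference word count."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edit distance over reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)
```

For the reference "the cat sat on the mat" and the hypothesis "the cat sit on mat", there is one substitution and one deletion against six reference words, so WER is 2/6, roughly 33%. Because insertions count as errors, WER can exceed 100% on badly hallucinated output.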

Testing on diverse datasets exposes model weaknesses. Clean speech benchmarks like LibriSpeech show best-case accuracy, while noisy datasets like CHiME reveal robustness to background sounds. Accented speech datasets test generalization across dialects. Domain-specific test sets validate performance on technical vocabulary, medical terms, or industry jargon.

Granite-Speech-3.3 and Distil-Whisper demonstrate strong performance in various conditions; Wav2Vec2 shows limitations for production. Distil-Whisper achieves accuracy close to full Whisper models at 6x faster inference, making it ideal for real-time applications. Granite-Speech-3.3 excels in noisy environments and multilingual scenarios. Wav2Vec2 requires substantial fine-tuning and struggles with out-of-domain audio.

| Model | WER (LibriSpeech) | RTFx | Strengths | Weaknesses |
|---|---|---|---|---|
| Whisper Large | 2.5% | 0.15 | Exceptional accuracy, multilingual | High latency, large model size |
| Distil-Whisper | 3.1% | 0.9 | Fast inference, good accuracy | Slightly lower quality than full model |
| Granite-Speech-3.3 | 3.8% | 0.6 | Noise robustness, multilingual | Moderate compute requirements |
| Wav2Vec2 Base | 6.2% | 1.2 | Fast, small footprint | Requires extensive fine-tuning |

Common troubleshooting tips for ASR deployment:

- High WER on specific speakers: expand training data diversity to cover more accents and speaking styles

- Increased latency under load: implement request batching and asynchronous processing queues

- Model hallucinations on silence: improve voice activity detection to filter non-speech segments

- Poor performance on domain terms: fine-tune with in-domain data and custom language models

- Memory spikes during inference: reduce batch size, shorten audio chunks, or run large models at lower precision

Model hallucination occurs when systems generate plausible but incorrect text, especially during silence or background noise. Combining ASR with robust voice activity detection mitigates this issue. Accuracy-speed trade-offs require careful tuning: faster models sacrifice quality, while high-accuracy models demand more compute. Profile your specific workload to find the optimal balance for your application.

Explore Sofia🤖: your AI-powered personal assistant

Implementing robust speech recognition opens new possibilities for intuitive AI interaction. Sofia leverages advanced speech recognition technologies to deliver natural voice chat, enabling seamless conversations with over 60 state-of-the-art AI models. Whether you're building conversational interfaces, analyzing spoken content, or streamlining productivity workflows, Sofia demonstrates practical applications of the ASR techniques covered in this guide.

Developers integrating speech capabilities into AI applications benefit from Sofia's real-time streaming responses and document analysis features. The platform handles the complexity of model orchestration, security, and scaling, letting you focus on building user experiences. Explore how Sofia combines speech recognition with enterprise-grade AI to enhance productivity across development, content creation, and business workflows.

Frequently asked questions

What is the best architecture for real-time speech recognition in 2026?

RNN-T and other Transducer architectures excel for real-time applications due to their streaming capabilities and low latency. They emit output incrementally as audio arrives, unlike full-attention encoder-decoder models, which typically need the complete utterance. For production systems, hybrid approaches combining streaming encoders with language model fusion offer the best balance of speed and accuracy.

How do I balance accuracy and latency in ASR systems?

Start by profiling your application's latency budget and minimum acceptable WER. Use model distillation or quantization to reduce inference time while preserving accuracy. Adjust beam search width and language model weight during decoding to fine-tune the accuracy-speed trade-off. Test multiple configurations with real-world audio to find the optimal balance.
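
As a rough illustration of what quantization does, the sketch below applies symmetric per-tensor INT8 quantization to a list of weights and computes the storage saving behind the 75% figure cited earlier in this guide. The function names are hypothetical and the scheme is the simplest possible one. Real toolchains such as ONNX Runtime add calibration, per-channel scales, and quantized kernels.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def size_reduction(bits_before: int = 32, bits_after: int = 8) -> float:
    """Fraction of weight storage saved when each value uses fewer bits."""
    return 1 - bits_after / bits_before
```

Going from 32-bit floats to 8-bit integers gives `size_reduction()` of 0.75, and each weight is reconstructed to within half a quantization step (`scale / 2`). Whether that rounding error is acceptable for your WER target is something only evaluation on your own audio can answer.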

Which datasets are recommended for ASR evaluation?

Use LibriSpeech for clean speech benchmarks, CHiME for noisy conditions, and Common Voice for accent diversity. Dialect-focused corpora like CORAAL for African American English, and domain-specific sets such as medical transcription corpora, validate specialized applications. Testing across multiple datasets reveals model strengths and weaknesses better than any single benchmark.

How do I handle multilingual speech recognition challenges?

Choose models pretrained on multilingual data like Whisper or MMS. Fine-tune on your target languages with balanced training data to avoid bias toward high-resource languages. Implement language identification to route audio to language-specific models when accuracy matters more than simplicity. Test thoroughly on code-switching scenarios if users mix languages.

What are common pitfalls to avoid when deploying ASR in production?

Neglecting voice activity detection leads to hallucinations on silence. Insufficient testing on diverse accents and noise conditions causes poor real-world performance. Ignoring latency profiling under load results in degraded user experience. Failing to monitor production metrics prevents catching accuracy regressions. Always validate with representative audio before full deployment.