Many professionals believe that bigger AI models always deliver better results, but real-world evaluations reveal that model selection depends on your specific use case and deployment constraints. Understanding the different types of AI models and their practical applications can transform how you approach content creation, development workflows, and digital marketing strategies. This guide breaks down the essential AI model categories, architectural considerations, and evaluation methods that matter for professionals seeking genuine productivity gains in 2026. You'll learn which models excel at specific tasks, how to assess their real-world performance, and practical strategies for integrating them into your workflows.

Table of Contents

- Understanding The Main Types Of AI Models In 2026

- The Evolving Landscape Of AI Model Architectures And Performance

- Evaluating AI Model Effectiveness Beyond Benchmarks

- Integrating AI Models Effectively For Productivity Enhancement

- Explore Sofia: Your AI-Powered Personal Assistant

- FAQ About AI Models Explained

Key takeaways

| Point | Details |

|---|---|

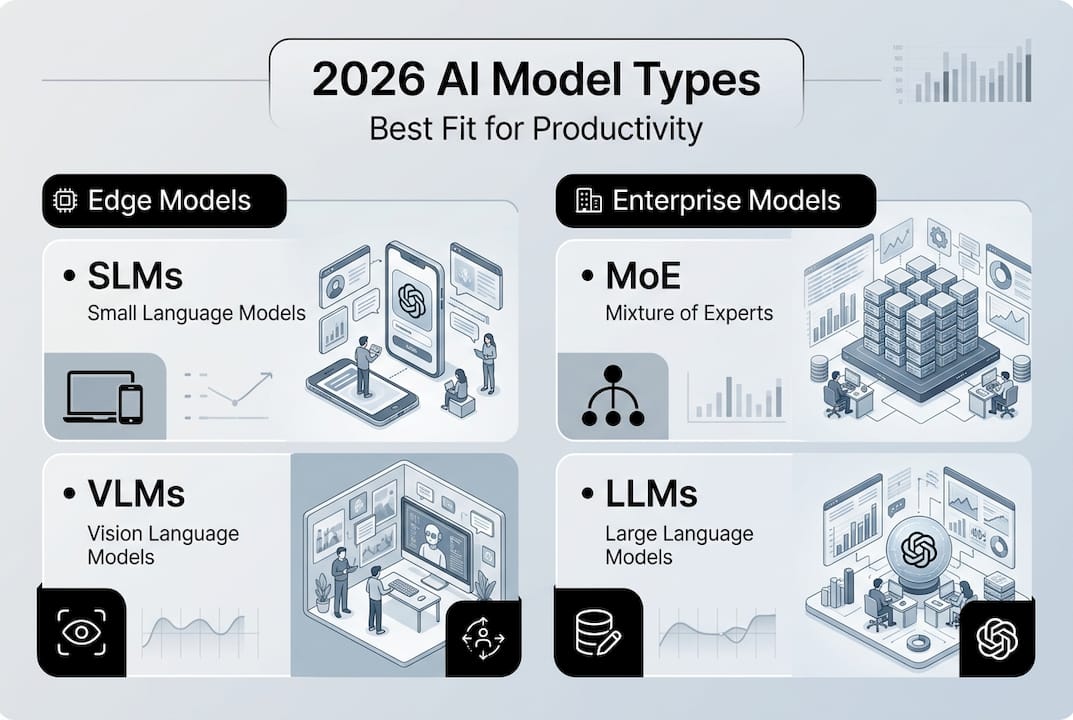

| Model types serve distinct roles | Small Language Models and Vision Language Models excel at edge deployment and content tasks, while Mixture of Experts and Large Language Models handle complex reasoning |

| Architecture impacts deployment | Dense models offer simplicity for practitioners, while MoE architectures enable massive scaling but introduce routing overhead and inference complexity |

| Real-world testing beats benchmarks | Standard benchmarks like MMLU saturate quickly, making human preference evaluations and domain-specific tests more valuable for assessing practical effectiveness |

| Integration strategy matters | Chaining specialized models in agent systems delivers superior results for multi-step workflows compared to relying on a single general-purpose model |

Understanding the main types of AI models in 2026

The AI model landscape has evolved beyond simple categorization into a spectrum of specialized architectures designed for specific tasks. Knowing which model type fits your needs saves time, reduces costs, and improves output quality across development and marketing workflows.

Small Language Models (SLMs) and Vision Language Models (VLMs) represent the practical workhorses for edge deployment and content tasks. SLMs run efficiently on devices with limited computational resources, making them ideal for real-time applications like chatbots, email drafting, and quick content summarization. VLMs combine visual and textual understanding, enabling professionals to analyze screenshots, extract data from infographics, and generate image descriptions for accessibility. These models power voice recognition AI models that transcribe meetings and image analysis AI models that categorize visual assets.

Mixture of Experts (MoE) and Large Language Models (LLMs) tackle the complex reasoning tasks that demand deeper contextual understanding. MoE architectures activate only relevant subsets of parameters for each query, allowing trillion-parameter models to run efficiently. LLMs excel at long-form content generation, technical documentation, and strategic analysis where nuanced comprehension matters. Digital marketers use these models for campaign strategy development, while developers rely on them for code review and architecture planning.

Agent systems represent the cutting edge by chaining specialized models in sequences like VLM to LRM (Large Reasoning Model) to LAM (Large Action Model). This approach breaks complex workflows into discrete steps, with each model handling its area of expertise. A content creation agent might use a VLM to analyze competitor visuals, an LRM to strategize positioning, and an LAM to generate the final copy and schedule publication.

Practical applications span industries:

- Content creators use VLMs for visual asset management and automated alt text generation

- AI developers deploy SLMs for client-side features that protect user privacy

- Marketing teams leverage LLMs for audience research and personalized campaign messaging

- Product managers chain models to automate user feedback analysis and feature prioritization

Pro Tip: Match model size to task complexity. Use SLMs for straightforward classification and summarization, reserve LLMs for tasks requiring deep context like strategic planning or technical writing, and consider agent systems when workflows involve multiple distinct reasoning steps.

The evolving landscape of AI model architectures and performance

Architectural choices fundamentally determine how well an AI model scales, handles context, and performs in production environments. Understanding these trade-offs helps you select models that align with your infrastructure capabilities and performance requirements.

Transformers dominate the current landscape due to their proven scaling properties, but their quadratic attention mechanism creates challenges for processing long documents or conversations. Alternative architectures like State Space Models (SSMs) promise linear scaling for extended contexts, yet Transformers maintain their lead through extensive optimization and ecosystem support. The attention mechanism's computational cost grows exponentially with input length, making 100,000-token contexts expensive to process even on modern hardware.

Mixture of Experts architectures offer a compelling solution for scaling to trillion-parameter models by activating only a fraction of parameters per query. This "sparse" approach theoretically enables massive capacity without proportional compute costs. However, system overheads from routing decisions and expert loading erode these gains during low-batch inference scenarios common in interactive applications. The routing mechanism adds latency as the model decides which experts to activate, and memory bandwidth constraints emerge when frequently switching between expert weights.

Dense models remain the practical choice for many practitioners despite their higher parameter counts per active computation. They offer simpler deployment pipelines, more predictable latency profiles, and easier debugging when issues arise. The straightforward architecture means fewer moving parts that can fail or introduce unexpected behavior in production environments. This simplicity proves valuable when building real-time AI applications where consistent performance matters more than theoretical efficiency.

| Architecture | Strengths | Limitations | Best Use Cases |

|---|---|---|---|

| Transformer (Dense) | Proven scaling, robust ecosystem, predictable performance | Quadratic attention cost, memory intensive for long contexts | General-purpose tasks, established workflows, production stability |

| Mixture of Experts | Massive parameter scaling, efficient per-query compute | Routing overhead, complex deployment, memory bandwidth constraints | Large-scale batch processing, research applications |

| State Space Models | Linear scaling for long contexts, efficient inference | Less mature ecosystem, limited proven applications | Document analysis, long-form content processing |

Pro Tip: For production deployments prioritizing reliability and consistent latency, start with dense Transformer models from established providers. Experiment with MoE or SSM architectures only when you have specific scaling challenges that justify the added complexity and when your infrastructure team can handle the operational overhead.

Evaluating AI model effectiveness beyond benchmarks

Standard benchmarks have lost their predictive power as models increasingly saturate popular tests like MMLU, making real-world evaluation essential for assessing practical utility. The gap between benchmark scores and actual performance in professional contexts has widened as models optimize for test datasets rather than generalizable capabilities.

Popular benchmarks suffer from several critical limitations that reduce their value for decision-making. Models memorize common test questions during training, inflating scores without improving genuine reasoning ability. Multiple-choice formats fail to capture the nuanced judgment required for open-ended tasks like content creation or strategic analysis. Benchmark saturation means that small score differences between top models rarely translate to noticeable quality gaps in practice.

Real-world evaluation approaches provide more actionable insights:

- SWE-Bench tests coding models on actual GitHub issues, measuring their ability to generate working patches for real bugs

- Human preference evaluations compare model outputs side-by-side for subjective quality factors like tone, clarity, and usefulness

- Domain-specific assessments create custom test sets reflecting your actual use cases and success criteria

- Production metrics track downstream business outcomes like user engagement, conversion rates, or time saved

Conducting meaningful model assessments requires a structured approach:

- Define success metrics aligned with your business objectives, whether that's content quality, response accuracy, or task completion rate

- Create a representative test set sampling the diversity and difficulty of real tasks your team handles

- Establish baseline performance using your current solution or manual processes to quantify improvement

- Run blind comparisons where evaluators assess outputs without knowing which model generated them

- Measure both quality and efficiency factors including latency, cost per query, and failure rates

- Iterate based on findings, adjusting prompts, model selection, or integration patterns

"The most valuable model evaluations happen in production environments where real users interact with outputs. Synthetic benchmarks provide a starting point, but human judgment on domain-specific tasks reveals which models actually deliver value." — Industry evaluation research

This approach helps teams working on AI content creation techniques or speech recognition implementation make informed model choices based on actual performance rather than marketing claims.

Pro Tip: Build a small but high-quality evaluation set of 50 to 100 examples representing your most challenging and important use cases. Manually review model outputs on this set monthly to catch quality regressions before they impact users, and use the insights to refine your prompts and integration patterns.

Integrating AI models effectively for productivity enhancement

Successful AI integration requires matching model capabilities to specific workflow stages rather than applying a single model to every task. Strategic deployment maximizes quality while controlling costs and latency across your operations.

Small Language Models and Vision Language Models deliver the best results for edge content tasks requiring fast response times. Deploy SLMs for real-time features like autocomplete, quick summarization, and classification tasks where millisecond latency matters. VLMs excel at processing visual content for asset management, generating image descriptions, and extracting structured data from screenshots. These lightweight models run on user devices or edge servers, reducing infrastructure costs and protecting sensitive data through local processing.

Mixture of Experts and Large Language Models handle the complex reasoning and long-form generation that defines high-value professional work. Reserve these powerful models for tasks like strategic planning, technical documentation, detailed analysis, and creative content development. The higher computational cost justifies itself when output quality directly impacts business outcomes. Batch similar requests together to improve throughput and reduce per-query expenses.

Chaining specialized models in agent systems produces superior results for multi-step workflows compared to monolithic approaches. A content marketing workflow might use a VLM to analyze competitor visuals, an LRM to develop positioning strategy, and an LAM to generate final copy and schedule distribution. Each model focuses on its strength, and the orchestration layer manages data flow between stages. This modular design enables you to swap individual models as better options emerge without rebuilding the entire system.

| Integration Approach | Benefits | Challenges | Recommended For |

|---|---|---|---|

| Edge SLMs/VLMs | Low latency, privacy-preserving, reduced infrastructure costs | Limited capability, requires optimization for deployment | Real-time features, device-based apps, privacy-sensitive tasks |

| Cloud LLMs/MoE | Maximum capability, handles complex reasoning, easier updates | Higher cost per query, latency from network calls | Strategic work, long-form content, detailed analysis |

| Chained Agent Systems | Specialized expertise per stage, modular architecture, optimized cost | Complex orchestration, debugging difficulty, integration overhead | Multi-step workflows, content pipelines, automated research |

Best practices for deployment and scaling:

- Start with a single well-defined use case and measure impact before expanding to additional workflows

- Implement fallback logic to handle model failures gracefully and maintain user experience

- Monitor cost per query and set budget alerts to prevent unexpected expenses from usage spikes

- Cache frequent queries to reduce redundant API calls and improve response times

- Version control your prompts and integration code to enable rollback when issues emerge

- Collect user feedback on output quality to identify improvement opportunities

These strategies prove especially valuable for teams focused on AI for business productivity or evaluating Sophea.ai alternatives for their specific requirements.

Pro Tip: Balance model complexity with business needs by calculating the value of quality improvements against increased costs. If upgrading from a smaller to larger model improves output quality by 10% but triples costs, assess whether that quality gain translates to proportional business value like higher conversion rates or time savings.

Explore Sofia: your AI-powered personal assistant

Applying the insights from this guide becomes straightforward with the right tools. Sofia integrates over 60 state-of-the-art AI models from providers like GPT-4o, Claude 4.0, and Gemini 2.5 into a single platform, letting you access SLMs, VLMs, and LLMs without managing multiple subscriptions or APIs. The platform handles the complexity of model selection and orchestration while you focus on getting work done.

Key features designed for professionals include real-time streaming responses for interactive workflows, document analysis for PDFs and images, natural voice chat with speech recognition, and team collaboration capabilities. Security remains a priority through GDPR compliance, enterprise encryption, and granular privacy controls. Flexible pricing serves individual users, teams, and large enterprises with custom AI profiles and collaboration tools.

Pro Tip: Start by identifying your most time-consuming repetitive task, whether that's drafting emails, analyzing documents, or generating content summaries. Use Sofia to automate that single workflow first, measure the time saved, then gradually expand to additional use cases as you become comfortable with the platform's capabilities.

FAQ about AI models explained

What is the difference between LLMs and MoE models?

Large Language Models use all their parameters for every query, providing consistent performance but requiring substantial compute resources. Mixture of Experts models activate only relevant parameter subsets per query, enabling trillion-parameter scaling with lower per-query costs, though routing overhead can reduce efficiency gains in low-batch scenarios.

How can I evaluate AI models for my business use case?

Create a test set of 50 to 100 examples representing your actual tasks, then compare model outputs using blind evaluation where reviewers assess quality without knowing which model generated each response. Measure both output quality and practical factors like latency, cost per query, and failure rates to make informed decisions.

What are the most practical AI models for digital marketing in 2026?

Use Vision Language Models for analyzing competitor visuals and generating image descriptions, Large Language Models for campaign strategy and long-form content creation, and Small Language Models for real-time features like chatbots and email personalization. Chain these models in agent systems for complex workflows like audience research followed by personalized content generation.

When should I use agent systems that chain multiple models?

Agent systems excel at multi-step workflows where each stage requires different expertise, such as visual analysis followed by strategic reasoning and then content generation. The modular approach lets you optimize each component independently and swap models as better options emerge without rebuilding the entire system.

How do I balance model capability with deployment costs?

Match model size to task value by using lightweight SLMs for high-volume, low-stakes tasks and reserving powerful LLMs for work where quality directly impacts business outcomes. Batch similar requests, cache frequent queries, and monitor cost per query to identify optimization opportunities without sacrificing essential capabilities.