Most people think AI text analysis is just fancy keyword spotting. It's not. Modern AI text analysis uses neural networks and large language models to interpret context, sentiment, and meaning at scale, transforming how businesses and researchers extract insights from unstructured data. Whether you're analyzing customer feedback, generating reports, or conducting market research, understanding AI text analysis unlocks productivity gains that traditional methods can't match. This guide covers the technology behind AI text analysis, its practical benefits, tool selection criteria, and implementation best practices to help you leverage these capabilities effectively.

Table of Contents

- Key takeaways

- Understanding AI text analysis: definition and core components

- How AI text analysis transforms business and research workflows

- Comparing AI text analysis tools and techniques: choosing the right approach

- Best practices and challenges in implementing AI text analysis

- Explore AI-powered solutions with Sofia🤖

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Definition and components | AI text analysis uses neural networks and large language models to interpret context, sentiment, and meaning from unstructured text. |
| Business and research benefits | The approach accelerates workflows, scales to millions of documents, and enables deeper, more actionable insights than traditional methods. |
| Key capabilities | Sentiment analysis, named entity recognition, text classification, summarization, and content generation are supported by modern AI text analysis. |
| Best practices and cautions | Always include human review to catch hallucinations and biases before acting on model outputs. |

Understanding AI text analysis: definition and core components

AI text analysis refers to automated systems that process, interpret, and extract insights from written language using artificial intelligence. Unlike basic keyword matching, AI text analysis understands context, identifies sentiment, recognizes entities, and generates human-like text based on patterns learned from massive datasets. The goal is to turn unstructured text into actionable intelligence faster and more accurately than manual review.

Natural language processing forms the foundation. NLP techniques break text into components like tokens, parts of speech, and syntactic structures. Traditional NLP uses rule-based systems and statistical models to classify text, extract entities, and perform sentiment analysis. These methods work well for structured tasks but struggle with nuance and context.
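
To make the traditional approach concrete, here is a minimal sketch of tokenization plus a lexicon-based sentiment rule. The word lists and function names are illustrative assumptions, not part of any particular library, and a production system would use far larger, curated resources.

```python
import re
from collections import Counter

# Tiny illustrative lexicons (assumed for this sketch only).
POSITIVE = {"great", "love", "fast", "helpful"}
NEGATIVE = {"slow", "broken", "hate", "confusing"}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def lexicon_sentiment(text: str) -> str:
    """Label text by counting positive versus negative lexicon hits."""
    counts = Counter(tokenize(text))
    score = sum(counts[w] for w in POSITIVE) - sum(counts[w] for w in NEGATIVE)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(lexicon_sentiment("Great app, love it!"))  # positive
print(lexicon_sentiment("Not great at all"))     # positive: the negation is missed
```

The second call shows the limitation described above: a word-counting rule has no notion of negation or context, so it labels "Not great at all" as positive.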

Large language models revolutionized the field. Models like GPT-4o, Claude, and Gemini learn language patterns from billions of text examples, enabling them to understand context, generate coherent responses, and perform complex reasoning tasks. Hybrid approaches that combine traditional NLP with LLMs improve reliability by pairing rule-based precision with LLM flexibility. Retrieval-augmented generation improves accuracy by grounding model outputs in verified source documents.

Neural networks power modern AI text analysis. These multi-layered architectures rely on word embeddings, attention mechanisms, and transformer layers to identify patterns in text. Training involves feeding networks massive text corpora so they learn relationships between words, phrases, and concepts. Fine-tuning adapts pre-trained models to specific domains like legal analysis or medical research.

Key capabilities include:

- Sentiment analysis to gauge emotional tone in customer reviews or social media

- Named entity recognition to identify people, organizations, and locations

- Text classification for organizing documents by topic or intent

- Summarization to condense long reports into key points

- Content generation for drafting emails, reports, or marketing copy

Pro Tip: Never deploy AI text analysis outputs without human review. Models can hallucinate facts, perpetuate biases, or miss critical nuances that domain experts catch instantly. Treat AI as an assistant, not a replacement.

For visual content needs, explore our image analysis AI guide to understand how computer vision complements text analysis in multimodal workflows.

How AI text analysis transforms business and research workflows

AI text analysis accelerates workflows by automating repetitive reading and interpretation tasks. Business professionals spend less time manually reviewing customer feedback, market reports, or competitor content. Researchers process literature reviews, survey responses, and qualitative data at unprecedented speed. The technology scales effortlessly from hundreds to millions of documents without proportional cost increases.

Primary benefits include:

- Scalability to analyze thousands of documents in minutes versus weeks of manual work

- Speed in generating insights, summaries, and reports for faster decision making

- Depth of analysis through pattern recognition across datasets too large for human review

- Consistency in applying evaluation criteria without fatigue or subjective drift

- Cost efficiency by reducing labor hours on routine analysis tasks

Customer feedback analysis exemplifies practical value. Companies collect reviews, support tickets, and survey responses across multiple channels. AI text analysis categorizes feedback by topic, identifies emerging issues, and tracks sentiment trends over time. Marketing teams use these insights to refine messaging. Product teams prioritize feature requests based on frequency and sentiment intensity.
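
As a rough sketch of the trend-tracking step, the snippet below groups already-labeled feedback by topic and month. The records, topic names, and the `sentiment_trend` helper are hypothetical; it assumes an upstream model has already assigned a topic and a sentiment score between -1 and 1 to each item.

```python
from collections import defaultdict

# Hypothetical records: (month, topic, sentiment score in [-1, 1]).
feedback = [
    ("2024-01", "billing", -0.6),
    ("2024-01", "onboarding", 0.4),
    ("2024-02", "billing", -0.8),
    ("2024-02", "billing", -0.4),
    ("2024-02", "onboarding", 0.6),
]

def sentiment_trend(records):
    """Return {topic: {month: (item count, mean sentiment)}}."""
    buckets = defaultdict(lambda: defaultdict(list))
    for month, topic, score in records:
        buckets[topic][month].append(score)
    return {
        topic: {
            month: (len(scores), round(sum(scores) / len(scores), 2))
            for month, scores in sorted(months.items())
        }
        for topic, months in buckets.items()
    }

trend = sentiment_trend(feedback)
print(trend["billing"])  # volume doubles in February while sentiment stays negative
```

Counting items per topic gives the frequency signal, and the mean score gives the sentiment signal that product teams can use to prioritize.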

Market research benefits from automated competitor monitoring. AI systems scan news articles, press releases, and social media to track competitor announcements, product launches, and customer reactions. Analysts receive daily summaries highlighting significant developments instead of manually searching dozens of sources.

Automated report generation saves hours weekly. AI text analysis extracts key data points from source documents, structures findings into templates, and drafts narrative summaries. Analysts review and refine outputs rather than starting from scratch. This approach works for financial reports, research summaries, and executive briefings.

To integrate AI text analysis into your workflows:

- Identify repetitive text tasks consuming significant time weekly

- Collect representative sample documents to test AI system performance

- Select tools matching your accuracy requirements and technical capabilities

- Fine-tune models on domain-specific data for improved relevance

- Establish human review protocols to catch errors and validate outputs

- Monitor performance metrics and adjust prompts or training data accordingly

Researchers should leverage benchmarks like MTEB and LongBench to evaluate model performance on tasks similar to their use cases. Fine-tuning on domain corpora improves accuracy for specialized terminology and context.

Real-time processing capabilities enable immediate responses. Customer service teams use AI to suggest replies during live chat sessions. Sales teams receive instant summaries of prospect communications. Learn more about real-time AI advantages for competitive positioning.

Content generation extends beyond analysis. AI drafts blog posts, product descriptions, and email campaigns based on brief prompts. Marketing teams produce more content with fewer writers. Our create AI content guide details strategies for maintaining quality and brand voice.

Human-in-the-loop remains essential. Subject matter experts validate AI-generated insights, correct factual errors, and add context machines miss. This collaboration maximizes productivity gains while maintaining output quality and trustworthiness.

Comparing AI text analysis tools and techniques: choosing the right approach

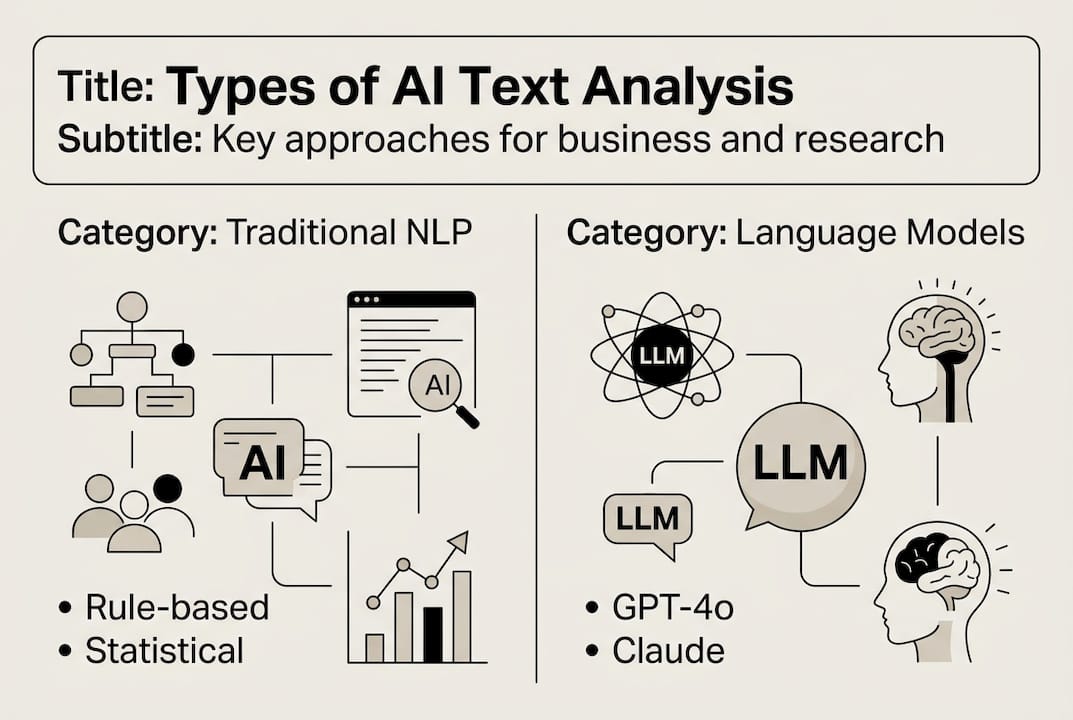

Selecting the right AI text analysis approach depends on your accuracy requirements, data complexity, and available technical resources. Three primary categories exist: traditional NLP methods, large language model-based systems, and hybrid approaches combining both.

Traditional NLP uses rule-based systems and statistical models. These methods excel at structured tasks like entity extraction from standardized documents or sentiment classification in product reviews. They require less computational power and produce predictable results. However, they struggle with ambiguous language, sarcasm, and complex reasoning tasks.

Large language models offer flexibility and contextual understanding. Systems like GPT-4o, Claude, and Gemini handle nuanced language, generate human-like text, and perform multi-step reasoning. They adapt to new tasks through prompt engineering without retraining. The tradeoff involves higher computational costs, potential hallucinations, and less transparency in decision-making processes.

Hybrid approaches that combine traditional NLP with LLMs improve reliability by using rule-based systems for structured extraction and LLMs for interpretation and generation. This combination delivers accuracy where it matters most while maintaining efficiency.
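
One way to picture the hybrid split is the sketch below: regular expressions extract the structured fields, and a model callable handles interpretation. The `ORD-` ID format, the `analyze_ticket` function, and the `fake_llm` stand-in are all assumptions made for illustration; in practice `interpret` would wrap a call to whichever LLM you use.

```python
import re
from typing import Callable

ORDER_ID = re.compile(r"\bORD-\d{6}\b")      # hypothetical order ID format
AMOUNT = re.compile(r"\$\d+(?:\.\d{2})?")    # simple dollar amounts

def analyze_ticket(text: str, interpret: Callable[[str], str]) -> dict:
    """Rules extract structured fields; the injected model interprets the text."""
    return {
        "order_ids": ORDER_ID.findall(text),
        "amounts": AMOUNT.findall(text),
        "summary": interpret(text),
    }

def fake_llm(text: str) -> str:
    """Stand-in for a real model call, so the sketch runs offline."""
    return "Customer reports a duplicate charge."

result = analyze_ticket("I was charged $49.99 twice for ORD-123456.", fake_llm)
print(result["order_ids"], result["amounts"])  # ['ORD-123456'] ['$49.99']
```

Because the extraction is deterministic, those fields can be trusted without review, which lets human reviewers focus on the model-written summary.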

| Approach | Accuracy | Scalability | Complexity | Human oversight |
|---|---|---|---|---|
| Traditional NLP | High for structured tasks | Moderate | Low to moderate | Minimal for routine tasks |
| Large language models | Variable, context-dependent | High | High | Essential for validation |
| Hybrid methods | Highest overall | High | Moderate to high | Moderate, focused reviews |
| Retrieval-augmented generation | Very high for factual tasks | Moderate to high | Moderate | Lower than pure LLMs |

Retrieval-augmented generation improves factual accuracy by grounding model outputs in source documents. The system retrieves relevant passages from a knowledge base before generating responses, reducing hallucinations. This approach works well for technical documentation, research synthesis, and compliance-sensitive applications.
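
The retrieve-then-generate flow can be sketched as follows. This is a deliberately simplified illustration: it ranks passages with bag-of-words cosine similarity, whereas real systems typically use learned embeddings and a vector index. The function names and the prompt wording are assumptions.

```python
import math
import re
from collections import Counter

def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for learned embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages most similar to the query."""
    q = vectorize(query)
    return sorted(passages, key=lambda p: cosine(q, vectorize(p)), reverse=True)[:k]

def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Assemble a prompt that asks the model to answer only from retrieved sources."""
    sources = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(retrieve(query, passages, k)))
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

knowledge_base = [
    "Refunds are processed within 14 days.",
    "Our office is closed on public holidays.",
    "Refund requests need an order number.",
]
print(build_prompt("How long do refunds take?", knowledge_base, k=1))
```

The resulting prompt is what gets sent to the model. Requiring numbered citations also makes it easier for reviewers to check each claim against its source.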

Tool selection considerations:

- Data type: Structured documents favor traditional NLP; unstructured, nuanced text benefits from LLMs

- Accuracy requirements: High-stakes decisions demand hybrid approaches with human validation

- Volume: Large-scale analysis justifies investment in cloud-based LLM infrastructure

- Technical expertise: Traditional NLP requires programming skills; modern LLM platforms offer no-code interfaces

- Budget: Traditional methods cost less computationally; LLMs require subscription or API fees

Pro Tip: Test multiple approaches on representative samples before committing. Measure accuracy, processing time, and cost per document. The best solution balances performance with practical constraints specific to your use case.
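
A small harness along these lines can make that comparison repeatable. It is a sketch under stated assumptions: `benchmark` and `keyword_baseline` are hypothetical names, each document is assumed to need exactly one call, and `cost_per_call` is a figure you supply from your provider's pricing.

```python
import time
from typing import Callable

def benchmark(classify: Callable[[str], str], samples: list[tuple[str, str]],
              cost_per_call: float = 0.0) -> dict:
    """Run one candidate approach over labeled samples and report basic metrics."""
    if not samples:
        raise ValueError("samples must not be empty")
    start = time.perf_counter()
    correct = sum(classify(text) == label for text, label in samples)
    elapsed = time.perf_counter() - start
    n = len(samples)
    return {
        "accuracy": correct / n,
        "seconds_per_doc": elapsed / n,
        "cost_per_doc": cost_per_call,  # assumes one call per document
    }

def keyword_baseline(text: str) -> str:
    """Trivial baseline to compare other approaches against."""
    return "pos" if "love" in text else "neg"

samples = [("love it", "pos"), ("hate it", "neg"), ("not bad", "pos")]
print(benchmark(keyword_baseline, samples))
```

Running the same labeled samples through each candidate gives directly comparable numbers for the three measures named above.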

Voice-based applications require specialized considerations. Explore our speech recognition guide for integrating text analysis with audio processing workflows.

Open-source models like Llama and Mistral offer cost advantages for organizations with technical resources to deploy and maintain them. Proprietary models from OpenAI, Anthropic, and Google often provide stronger out-of-the-box performance along with managed infrastructure. Evaluate total cost of ownership including development time, infrastructure, and ongoing maintenance.

Domain-specific models fine-tuned on industry corpora outperform general-purpose systems for specialized tasks. Legal analysis, medical coding, and financial forecasting benefit from models trained on relevant professional literature. Weigh customization costs against accuracy improvements for your specific applications.

Best practices and challenges in implementing AI text analysis

Successful AI text analysis implementation requires careful planning, robust processes, and continuous improvement. Organizations that treat deployment as an ongoing optimization program achieve better results than those expecting plug-and-play solutions.

Data preprocessing significantly impacts accuracy. Clean, well-structured input text produces better outputs. Remove irrelevant formatting, standardize encodings, and handle special characters consistently. For multilingual analysis, ensure proper language detection and use models trained on relevant languages.
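
The cleanup steps above might look like this in practice. The sketch uses only the Python standard library; `clean_text` is a hypothetical helper, and the regex tag stripper is a simplification (a proper HTML parser is safer for messy markup).

```python
import html
import re
import unicodedata

def clean_text(raw: str) -> str:
    """Strip markup remnants and normalize characters and whitespace."""
    text = re.sub(r"<[^>]+>", " ", raw)          # drop leftover HTML tags
    text = html.unescape(text)                   # decode entities such as &amp;
    text = unicodedata.normalize("NFKC", text)   # unify compatibility characters
    text = re.sub(r"\s+", " ", text)             # collapse runs of whitespace
    return text.strip()

print(clean_text("<p>Caf\u00e9&nbsp;&amp; bar</p>\n\n \ufb01ne"))  # Café & bar fine
```

Tags are removed before entities are decoded so that escaped text such as `a &lt; b` is not mistaken for markup.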

Human oversight remains non-negotiable. Mitigate risks like model bias and inaccuracies through fine-tuning, retrieval-augmented generation, and human-in-the-loop validation processes. Domain experts should review outputs, especially for high-stakes decisions affecting customers, compliance, or strategy.

Evaluation benchmarks guide tool selection and performance monitoring. Test systems against standardized datasets like GLUE, SuperGLUE, or domain-specific benchmarks. Track metrics including accuracy, precision, recall, and F1 scores. Establish baseline performance before deployment and monitor for degradation over time.
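
For reference, precision, recall, and F1 for a single class can be computed as in the sketch below. The function name and sample labels are illustrative; libraries such as scikit-learn provide equivalent, more complete implementations.

```python
def precision_recall_f1(y_true: list[str], y_pred: list[str], positive: str):
    """Compute precision, recall, and F1 for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false positives
    fn = sum(t == positive and p != positive for t, p in pairs)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

y_true = ["neg", "neg", "pos", "pos", "neg"]
y_pred = ["neg", "pos", "pos", "neg", "neg"]
print(precision_recall_f1(y_true, y_pred, positive="neg"))
```

Recording these numbers on a fixed test set before deployment gives you the baseline against which later degradation can be measured.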

Best practices for reliable implementation:

- Start with pilot projects on well-defined, low-risk tasks to build confidence

- Document prompt templates and configuration settings for consistency

- Version control training data and model configurations for reproducibility

- Implement feedback loops where users flag errors to improve future performance

- Schedule regular model retraining as language usage and business context evolve

- Maintain audit trails for compliance and quality assurance purposes

- Set clear accuracy thresholds that trigger human review or system alerts
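
The threshold idea in the last item can be sketched as a simple routing step. The `Prediction` structure and the 0.85 cutoff are assumptions for illustration; the right threshold depends on your own error tolerance and on how well the model's confidence scores are calibrated.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    doc_id: str
    label: str
    confidence: float  # model-reported score in [0, 1]

REVIEW_THRESHOLD = 0.85  # hypothetical cutoff; tune on your own data

def route(predictions: list[Prediction], threshold: float = REVIEW_THRESHOLD):
    """Split predictions into auto-accepted items and a human review queue."""
    auto, review = [], []
    for p in predictions:
        (auto if p.confidence >= threshold else review).append(p)
    return auto, review

batch = [
    Prediction("doc-1", "complaint", 0.97),
    Prediction("doc-2", "praise", 0.62),
    Prediction("doc-3", "complaint", 0.85),
]
auto, review = route(batch)
print([p.doc_id for p in review])  # ['doc-2']
```

Logging both lists also supports the audit-trail practice, since it records which outputs a person actually checked.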

Common pitfalls include overestimating initial accuracy, underestimating maintenance requirements, and neglecting edge cases. Models trained on general internet text may perform poorly on specialized jargon or regional dialects. Bias in training data carries over into outputs, requiring active mitigation through diverse datasets and fairness testing.

Hallucinations pose significant risks. Large language models sometimes generate plausible-sounding but factually incorrect information. Combat this through retrieval-augmented generation, citation requirements, and fact-checking protocols. Never use AI-generated content in regulated contexts without expert verification.

Data privacy and security require attention. Ensure AI systems comply with GDPR, CCPA, and industry-specific regulations. Avoid sending sensitive information to third-party APIs without encryption and data processing agreements. Consider on-premise deployment for highly confidential analysis.
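
One complementary safeguard is to redact obvious personal data before text leaves your environment. The sketch below is a rough illustration, not a compliance tool: the patterns are simplified assumptions that will miss many formats and may also mask other long digit sequences, so treat it as a first filter alongside encryption and data processing agreements.

```python
import re

# Simplified patterns (assumptions for this sketch, not exhaustive).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

print(redact("Contact jane.doe@example.com or +1 (555) 123-4567."))
# Contact [EMAIL] or [PHONE].
```

Keeping the placeholders in the text preserves sentence structure, so downstream analysis such as sentiment or topic classification is largely unaffected.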

Change management matters as much as technology. Train users on AI capabilities and limitations. Set realistic expectations about accuracy and appropriate use cases. Celebrate early wins to build organizational buy-in while addressing concerns transparently.

Continuous improvement separates successful implementations from abandoned pilots. Collect user feedback systematically. Analyze error patterns to identify training gaps. Update prompts and fine-tuning data based on real-world performance. Compare alternative models as new options emerge.

For organizations evaluating multiple platforms, our Sophea.ai alternatives comparison helps assess features, pricing, and integration capabilities across leading solutions.

Explore AI-powered solutions with Sofia🤖

Ready to implement the AI text analysis strategies covered in this guide? Sofia🤖 provides access to over 60 state-of-the-art AI models including GPT-4o, Claude 4.0, and Gemini 2.5 through a single integrated platform. Analyze documents, generate content, and extract insights with natural voice chat, real-time streaming responses, and team collaboration features designed for business professionals and researchers.

The platform combines the flexibility of large language models with enterprise security through GDPR compliance and encryption. Custom AI profiles let you fine-tune analysis for your specific domain and use cases. Whether you're processing customer feedback, conducting literature reviews, or automating report generation, Sofia🤖 scales from individual productivity to organization-wide deployment. Explore the Sofia AI-powered personal assistant to see how accessible, secure AI text analysis accelerates your workflows.

Frequently asked questions

What is AI text analysis?

AI text analysis uses artificial intelligence to automatically process, interpret, and extract insights from written language. It combines natural language processing, large language models, and neural networks to understand context, sentiment, and meaning beyond simple keyword matching. Applications include customer feedback analysis, content generation, document summarization, and data interpretation for business and research.

How does AI text analysis benefit business workflows?

Businesses gain scalability to analyze thousands of customer reviews or market reports in minutes instead of weeks. AI text analysis accelerates decision making through faster insight generation, reduces labor costs on repetitive reading tasks, and maintains consistency in evaluation criteria. Common uses include automated competitor monitoring, sentiment tracking across social channels, and report drafting from source documents.

What's the difference between traditional NLP and large language models?

Traditional NLP uses rule-based systems and statistical models for structured tasks like entity extraction. Large language models learn from billions of text examples to understand context and generate human-like responses. Hybrid approaches combine both for optimal reliability, using rules for precision and LLMs for flexibility and nuanced interpretation.

How do I ensure AI text analysis accuracy?

Implement human-in-the-loop validation where domain experts review outputs, especially for high-stakes decisions. Use retrieval-augmented generation to ground responses in verified sources. Fine-tune models on domain-specific data and evaluate against benchmarks like MTEB. Monitor performance metrics continuously and establish accuracy thresholds that trigger manual review.

What are common challenges in AI text analysis implementation?

Organizations face model bias from training data, hallucinations where AI generates plausible but incorrect information, and accuracy issues with specialized terminology. Address these through diverse training datasets, fact-checking protocols, and regular model retraining. Data privacy compliance and change management also require careful attention during deployment.