TL;DR:

- Modern conversational AI is proactive, capable of complex multi-system tasks, unlike simple rule-based chatbots.

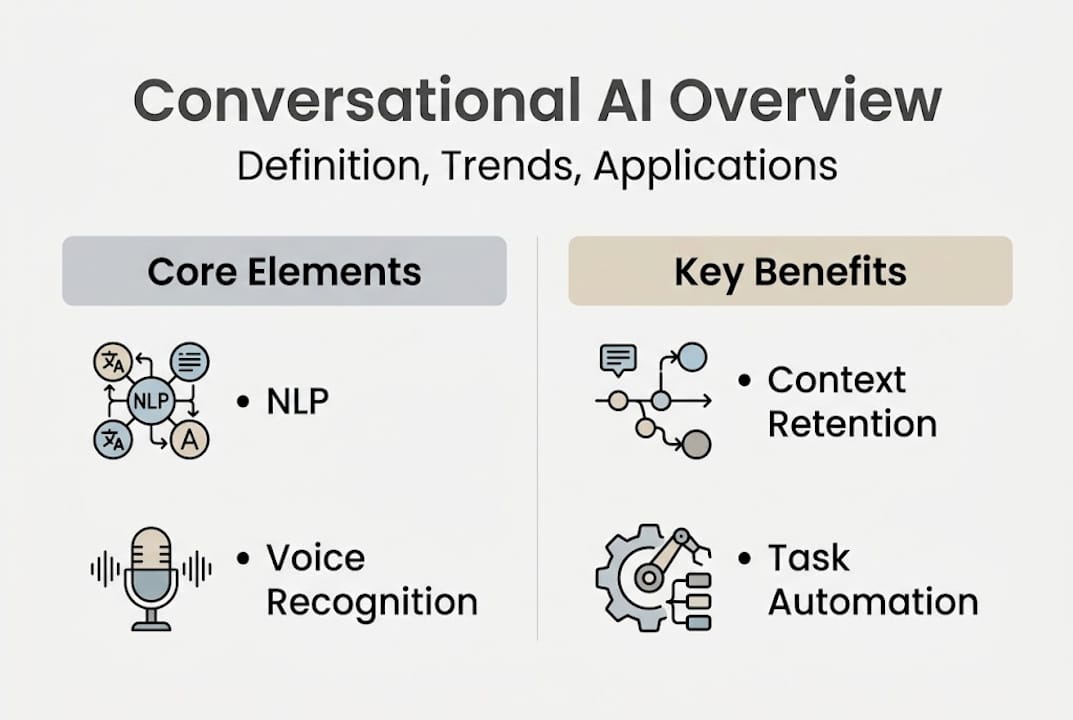

- Key components include NLP, intent recognition, multi-turn memory, speech recognition, and autonomous orchestration.

- Successful deployment relies on phased implementation, continuous monitoring, and focusing on measurable outcomes.

Conversational AI is no longer the simple rule-following bot you may have tested years ago. Gartner identifies a clear shift from reactive chatbots to agentic, multi-agent systems that integrate across enterprise tools and proactively complete complex tasks. For organizational leaders, this distinction is critical. Choosing the wrong technology tier, or misunderstanding what modern conversational AI can actually do, leads to wasted budgets and frustrated teams. This guide cuts through the noise, giving you a precise, practical understanding of what conversational AI is, how it works, where it falls short, and how to deploy it with confidence.

Table of Contents

- Defining conversational AI: Beyond chatbots

- Core capabilities: How conversational AI works

- Risks, edge cases, and human-AI collaboration

- Best practices for deploying conversational AI in organizations

- A practical perspective: What business leaders miss about conversational AI

- Explore enterprise-ready conversational AI solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Conversational AI is proactive | Modern systems go far beyond chatbots by predicting needs and automating complex workflows. |
| Edge cases need attention | Misinterpreted inputs and hallucinations require smart fallback strategies and proper escalation. |
| Measure real-world impact | Track resolution rates, successful handovers, and satisfaction, not just chatbot usage. |
| Human-AI balance is key | Use humans strategically in ambiguous or safety-critical cases, not as a default for all issues. |

Defining conversational AI: Beyond chatbots

With the stage set, it's important to clarify exactly what conversational AI means in 2026. The term gets used loosely, but the technology it describes is anything but simple.

At its core, conversational AI combines natural language processing (NLP), speech recognition, and large language models (LLMs) to create systems that understand human intent, maintain context across a conversation, and generate meaningful responses. That's fundamentally different from a rule-based chatbot, which follows a fixed decision tree and breaks the moment a user goes off script.

Here's a clear comparison:

| Feature | Rule-based chatbot | Conversational AI |
|---|---|---|
| Input handling | Keyword matching | NLP and intent recognition |
| Memory | None | Multi-turn context retention |
| Adaptability | Fixed scripts | Learns and adjusts dynamically |
| Integration | Limited | Connects to enterprise systems |
| Proactivity | Reactive only | Proactively initiates actions |

The most important distinction for business leaders is agentic behavior. Agentic AI proactively resolves tasks and connects multiple business systems without waiting for a human to trigger each step. Think of it less like a help desk form and more like a capable team member who anticipates what needs doing next.

Modern conversational AI platforms support:

- Multi-turn dialog with context memory across sessions

- Voice input through AI voice chat and natural speech interfaces

- Document analysis and structured data retrieval

- Integration with CRMs, calendars, project tools, and communication platforms

- Proactive task initiation based on triggers or schedules

As Gartner's Generative AI overview notes, the real value from generative and conversational AI is emerging in enterprise workflows, not consumer novelty. For decision-makers, this means the question is no longer "should we explore AI?" but "which tier of conversational AI fits our operational complexity?"

Understanding voice recognition AI as a component, for example, helps you evaluate whether a platform can handle your team's real-world communication patterns, not just typed queries.

Core capabilities: How conversational AI works

Now that we've defined what conversational AI is, let's break down how these solutions actually work behind the scenes and how they help your team.

Five components drive most enterprise-grade conversational AI systems:

- Natural language processing (NLP): Parses and interprets what a user actually means, not just what they typed.

- Intent recognition: Classifies the user's goal so the system routes the request correctly.

- Multi-turn memory: Retains context across a full conversation so users don't have to repeat themselves.

- Speech-to-text: Converts spoken input to structured text, enabling voice-driven workflows. A solid speech recognition guide can help your team evaluate accuracy benchmarks.

- Agentic orchestration: Coordinates multiple AI models or tools to complete multi-step tasks autonomously.

Here's how those components map to everyday business use cases:

| Capability | Business application |
|---|---|
| Intent recognition | Routing support tickets automatically |
| Multi-turn memory | Handling complex onboarding conversations |
| Speech-to-text | Voice-driven meeting notes and task capture |
| Agentic orchestration | Cross-system order status and fulfillment updates |
| NLP | Summarizing documents and extracting action items |

Agentic workflows allow proactive, AI-to-AI interactions that complete tasks across entire systems without a human prompting each step. That's a significant operational shift for teams managing high-volume, repetitive workflows.

For teams evaluating platforms, AI models for productivity vary significantly in how well they handle domain-specific language, long contexts, and multi-system orchestration. Not all models perform equally across these dimensions.

Following generative fallback best practices also matters here. When the AI doesn't recognize an intent, a well-designed fallback keeps the conversation productive rather than leaving users stuck in a loop.

Pro Tip: Start by mapping your top two or three most frequent employee or customer intents. Solving those first generates fast, measurable ROI before you expand to more complex workflows.
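If you already have a sample of requests labeled by intent, finding those top intents can be as simple as counting them. The sketch below is a minimal illustration, not a prescribed method: it assumes a flat list of intent labels, and the function name `top_intents` and the sample labels are hypothetical.

```python
from collections import Counter


def top_intents(labeled_intents, n=3):
    """Return the n most frequent intents with their share of all requests."""
    counts = Counter(labeled_intents)
    total = sum(counts.values())
    # most_common() returns an empty list for empty input, so no division by zero.
    return [(intent, count / total) for intent, count in counts.most_common(n)]


# Hypothetical sample: one intent label per logged request.
sample_log = ["password_reset"] * 5 + ["order_status"] * 3 + ["refund"] * 2

for intent, share in top_intents(sample_log, n=2):
    print(f"{intent}: {share:.0%} of requests")
```

In practice the labels would come from your ticketing system or from a manual review of a few hundred real conversations. The share of volume each intent represents is what tells you where early automation is likely to pay off.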

Risks, edge cases, and human-AI collaboration

Knowing what conversational AI can do, it's equally important to understand its real-world limits and where human intelligence remains essential.

Even the most capable systems encounter failure modes. The most common ones in enterprise deployments include:

- Hallucinations: Generative AI confidently produces incorrect information, especially outside its training domain.

- Fallback loops: The system repeatedly fails to recognize intent and cycles through unhelpful responses.

- Context degradation: In very long conversations, earlier context gets lost, causing the AI to contradict itself.

- Adversarial inputs: Users intentionally probe the system to bypass guardrails or extract unintended outputs.

- Domain-specific gaps: Technical or niche language the model wasn't trained on produces poor results.

For fallback loops in particular, a common best practice is to cap fallback attempts at three before escalating to a human. Keep tuning models on real conversation logs after launch so that fewer conversations reach that cap in the first place.
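To make the three-attempt fallback limit concrete, here is a minimal sketch of a per-conversation counter. It rests on some assumptions: the names `Conversation` and `handle_turn` are hypothetical, an unrecognized intent is represented as `None`, and the bot is allowed three clarifying replies before the next miss hands over to a human. Whether the third or the fourth consecutive miss triggers the handover is a design choice the text leaves open.

```python
from dataclasses import dataclass
from typing import Optional

MAX_FALLBACKS = 3  # the bot may ask for clarification at most three times in a row


@dataclass
class Conversation:
    """Tracks consecutive unrecognized turns for one conversation."""
    consecutive_fallbacks: int = 0
    escalated: bool = False


def handle_turn(conv: Conversation, intent: Optional[str]) -> str:
    """Decide the next action for a turn, given the recognized intent (or None)."""
    if conv.escalated:
        return "human"  # once handed over, the conversation stays with a person
    if intent is not None:
        conv.consecutive_fallbacks = 0  # a recognized intent resets the counter
        return f"handle:{intent}"
    conv.consecutive_fallbacks += 1
    if conv.consecutive_fallbacks > MAX_FALLBACKS:
        conv.escalated = True
        return "human"
    return "clarify"


conv = Conversation()
for recognized in [None, None, None, None]:
    print(handle_turn(conv, recognized))
```

Resetting the counter on any recognized intent means one confusing message in an otherwise healthy conversation does not count against the user later.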

Neurodiversity is another underappreciated edge case. Users with dyslexia, ADHD, or non-standard communication styles may phrase requests in ways the system fails to recognize, so valid intents get missed. Designing for these users from the start improves performance for everyone.

The human-in-the-loop debate is real, but the framing is often wrong. Most organizations default to human escalation too quickly, treating it as a safety net rather than a last resort. Overreliance on human oversight in analytical tasks can actually degrade performance, because humans introduce bias and inconsistency that a well-tuned AI avoids.

This connects to a broader point about AI collaboration: the goal is not to replace human judgment but to reserve it for situations where it genuinely adds value. Safety-critical decisions, highly creative tasks, and emotionally sensitive interactions are where humans should stay in the loop. Routine status checks and data lookups are not.

Pro Tip: Build escalation triggers based on confidence scores, not just topic categories. If the AI's confidence drops below a set threshold, escalate. If it's high, let it run.
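A confidence-based trigger can be expressed in a few lines. The sketch below is illustrative only. It assumes your platform exposes a confidence score between 0 and 1 for each predicted intent. The `Prediction` type, the `route` function, and the 0.75 threshold are all hypothetical and should be tuned against your own conversation logs.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.75  # illustrative value, not a recommendation


@dataclass(frozen=True)
class Prediction:
    intent: str
    confidence: float  # assumed to be in the range 0.0 to 1.0


def route(prediction: Prediction, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Send low-confidence predictions to a human, let high-confidence ones run."""
    if not 0.0 <= prediction.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "ai" if prediction.confidence >= threshold else "human"


print(route(Prediction("order_status", 0.92)))
print(route(Prediction("billing_dispute", 0.41)))
```

Keeping the threshold as a parameter makes it easy to set a stricter bar for sensitive intents than for routine lookups.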

Understanding AI model limitations before deployment prevents the most common post-launch surprises. Trust gaps, as Gartner notes, remain one of the top barriers to enterprise adoption of generative AI.

Best practices for deploying conversational AI in organizations

With the risks and limits in mind, here's a proven approach to successfully deploying conversational AI within your team or organization.

A structured four-step plan consistently outperforms ad hoc rollouts:

- Readiness assessment: Audit your current workflows for high-volume, repetitive interactions. Identify the top one or two intents that, if automated, would free the most time or reduce the most friction.

- Start small and prove value: Deploy a focused solution on those top intents. Resist the urge to build everything at once. A narrow, well-tuned deployment outperforms a broad, poorly tuned one every time.

- Integrate with core tools: Prioritize enterprise tool integration with your CRM, project management platform, and communication tools. Isolated AI adds friction; connected AI removes it.

- Continuous improvement loop: Collect conversation logs, review fallback triggers, and retrain on real data monthly. Post-launch tuning is where most of the long-term value is built.

Measuring success matters as much as the deployment itself. Use these metrics:

| Metric | What it tells you |
|---|---|
| Resolution rate | % of conversations fully resolved without human handover |
| Handover rate | % escalated to human agents |
| User satisfaction (CSAT) | How users rate the interaction quality |
| Fallback frequency | How often the AI fails to recognize intent |
| Time to resolution | Speed improvement versus previous process |
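Several of these metrics fall straight out of conversation logs. The sketch below shows one possible way to compute three of them. It is not a standard definition: the `ConversationLog` fields and the `summarize` function are hypothetical, and fallback frequency is assumed here to mean the share of all turns in which the AI failed to recognize an intent.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ConversationLog:
    resolved: bool       # the user's issue was fully resolved
    handed_over: bool    # the conversation was escalated to a human agent
    turns: int           # total user turns in the conversation
    fallback_turns: int  # turns where no intent was recognized


def summarize(logs: List[ConversationLog]) -> Dict[str, float]:
    """Compute resolution rate, handover rate, and fallback frequency."""
    if not logs:
        raise ValueError("at least one conversation is required")
    total = len(logs)
    total_turns = sum(log.turns for log in logs)
    # Resolution only counts when the AI finished the job without a handover.
    resolved_by_ai = sum(1 for log in logs if log.resolved and not log.handed_over)
    handovers = sum(1 for log in logs if log.handed_over)
    fallbacks = sum(log.fallback_turns for log in logs)
    return {
        "resolution_rate": resolved_by_ai / total,
        "handover_rate": handovers / total,
        "fallback_frequency": fallbacks / total_turns if total_turns else 0.0,
    }


sample = [
    ConversationLog(resolved=True, handed_over=False, turns=5, fallback_turns=0),
    ConversationLog(resolved=True, handed_over=False, turns=3, fallback_turns=1),
    ConversationLog(resolved=True, handed_over=True, turns=6, fallback_turns=2),
    ConversationLog(resolved=False, handed_over=False, turns=6, fallback_turns=1),
]
print(summarize(sample))
```

Note that resolution rate and handover rate need not add up to 100%. Conversations that users abandon count toward neither, which is a useful signal in its own right.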

Two common pitfalls to avoid: over-customizing the AI before you have real usage data, and ignoring post-launch feedback from the people actually using the system. Both lead to expensive rebuilds. Following AI productivity best practices and referencing a solid AI productivity guide helps you set realistic benchmarks from day one. Gartner predicts agentic AI will autonomously resolve 80% of common service issues by 2029, but that outcome requires disciplined, iterative deployment, not a one-time launch.

A practical perspective: What business leaders miss about conversational AI

Stepping back, here's what most organizations overlook when making conversational AI a core part of their workflow.

The biggest mistake is not choosing the wrong platform. It's treating deployment as a technology project rather than a behavior change initiative. Organizations that obsess over which model is newest consistently underperform compared to those that obsess over which problem they're actually solving.

Gartner's Hype Cycle currently places GenAI in the Trough of Disillusionment, meaning the hype has peaked and real-world results are now being scrutinized. That's actually good news for leaders who focus on measurable outcomes rather than trend adoption.

The organizations winning with conversational AI in 2026 are not the ones with the most sophisticated models. They're the ones who defined success before they wrote a single line of configuration.

The leap from pilot to production is where most programs stall. A pilot that works for 50 users in a controlled setting often fails at 5,000 users with messy, real-world inputs. Planning for that gap from the start, including edge case coverage, escalation design, and feedback loops, is what separates durable deployments from expensive experiments. Explore business AI realities for a grounded view of what sustainable adoption actually looks like.

Explore enterprise-ready conversational AI solutions

If you're ready to take the next step in leveraging conversational AI for your organization, tailored solutions are available that go well beyond basic chatbot functionality.

Sofia gives your team access to over 60 state-of-the-art AI models, including GPT-4o, Claude 4.0, and Gemini 2.5, all within a single, enterprise-secure platform. Built for real organizational workflows, Sofia supports natural voice chat, document analysis, real-time streaming responses, and team collaboration tools. With GDPR compliance, enterprise encryption, and custom AI profiles, it's designed for leaders who need both power and control. Whether you're running a pilot or scaling across departments, Sofia provides the infrastructure to deploy conversational AI that actually delivers measurable results.

Frequently asked questions

What makes conversational AI different from chatbots?

Conversational AI systems are proactive, handle complex multi-step interactions, and integrate with business tools, while traditional chatbots follow fixed rules. Agentic AI manages multi-step processes and cross-system actions that rule-based bots simply cannot handle.

How does conversational AI improve team productivity?

It automates repetitive tasks, retains context across conversations, and proactively assists with scheduling, status updates, and communication without requiring human intervention at each step. Gartner predicts that agentic AI will autonomously resolve 80% of common service issues by 2029.

What are the main risks in implementing conversational AI?

The primary risks are misinterpreted user input, generative AI hallucinations, and poorly designed escalation paths. Edge cases like fallback loops and context loss are common without proper tuning and monitoring.

How can we measure success with conversational AI?

Track resolution rate, handover (escalation) rate, and user satisfaction scores as your core metrics. Resolution and escalation rates are the primary indicators of deployment health, while fallback frequency and time to resolution show where to tune next.

When should humans take over from AI?

Humans should step in for safety-critical decisions, highly creative tasks, or emotionally sensitive interactions where context and judgment matter most. Human escalation adds value only when AI genuinely lacks the context or capability to ensure safety and clarity.