TL;DR:

- AI security must be a strategic priority due to growing risk exposure and potential financial losses.

- Secure AI platforms use encryption, workload isolation, and continuous monitoring to protect data and models.

- Prioritizing AI security enables faster deployment, compliance, customer trust, and operational stability.

Enterprise AI adoption is accelerating faster than most security teams can respond. According to industry forecasts, 40% of enterprise applications will embed AI agents by 2026, creating an attack surface larger than most organizations have managed before. Yet many technology leaders still treat AI security as a checkbox rather than a core business strategy. The consequences of that mindset are showing up in breach reports, regulatory fines, and competitive losses. This guide breaks down the real risks, the proven defenses, and the business case for treating secure AI as a strategic priority rather than an IT afterthought.

Table of Contents

- The hidden risks of unsecured AI platforms

- How secure AI platforms protect business value

- The evolving threat landscape: Agentic AI and security challenges

- Business benefits of prioritizing secure AI adoption

- Why most organizations misunderstand secure AI—and what to do instead

- Ready to secure your AI journey?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI risk multiplies at scale | Unsecured AI platforms expose sensitive data and create new vectors for costly breaches as adoption grows. |
| Security is business-critical | Securing AI protects data, intellectual property, and organizational reputation. It is more than an IT concern. |
| Modern threats need new defenses | Agentic AI introduces attacks like prompt injection, requiring purpose-built protections beyond traditional tools. |
| Secure AI enables growth | Companies with secure AI can innovate confidently, earn customer trust, and maintain regulatory compliance. |

The hidden risks of unsecured AI platforms

When AI adoption scales, so does the exposure. Most enterprises are now routing customer records, financial models, legal documents, and proprietary intellectual property through AI platforms daily. That data concentration creates a target that attackers find extremely attractive.

Enterprise AI systems process sensitive data including customer records, financial information, and IP, meaning a single exposure can cascade across multiple connected systems simultaneously. Unlike a traditional database breach, an AI platform compromise can corrupt model outputs, manipulate decision logic, and silently exfiltrate data over extended periods before anyone notices.

The financial stakes are rising sharply. Shadow AI refers to employees using unauthorized AI tools that bypass organizational controls, creating invisible data pipelines that security teams cannot monitor. Breaches involving shadow AI now cost enterprises an average of $4.63 million per incident, roughly $670,000 more than breaches that do not involve it.

The most common risk categories business leaders need to understand include:

- Data exposure: Sensitive inputs fed into AI models can be logged, cached, or leaked through misconfigured APIs

- Model theft: Proprietary fine-tuned models represent significant investment and can be extracted by adversaries

- Regulatory non-compliance: GDPR, CCPA, and emerging AI-specific regulations impose strict obligations on how AI processes personal data

- Agentic AI risk: Autonomous AI agents that take actions on behalf of users dramatically expand the attack surface

- Supply chain vulnerabilities: Third-party model providers and plugins introduce risks that are difficult to audit
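The data exposure risk above often starts with prompts being written to logs verbatim. The following is a minimal sketch of redacting obvious identifiers before a prompt is logged. The patterns and the `redact` helper are illustrative assumptions, not a complete data loss prevention solution; pattern matching will miss many kinds of sensitive data.

```python
import re

# Illustrative patterns only: real deployments need broader coverage
# (names, account IDs, free-text secrets) than two regular expressions.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def redact(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com, card 4111 1111 1111 1111 now"
    print(redact(prompt))
```

Redacting at the point where prompts enter logs or caches limits what a misconfigured API or leaked log file can reveal.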

"The moment an AI agent can browse the web, execute code, or send emails autonomously, it becomes a potential vector for privilege escalation and data exfiltration that traditional security tools were never designed to catch."

For a deeper look at how to approach these challenges systematically, AI security best practices offer frameworks that go well beyond basic firewall configuration. Organizations also need to recognize that AI-driven productivity gains are only sustainable when the underlying platform is trustworthy. Teams relying on AI-powered data analysis for business decisions need confidence that those outputs haven't been tampered with.

How secure AI platforms protect business value

Knowing the risks is only useful if you know what to look for in a solution. Secure AI platforms are not simply traditional software with a privacy policy attached. They are architecturally designed to contain threats at every layer of the AI stack.

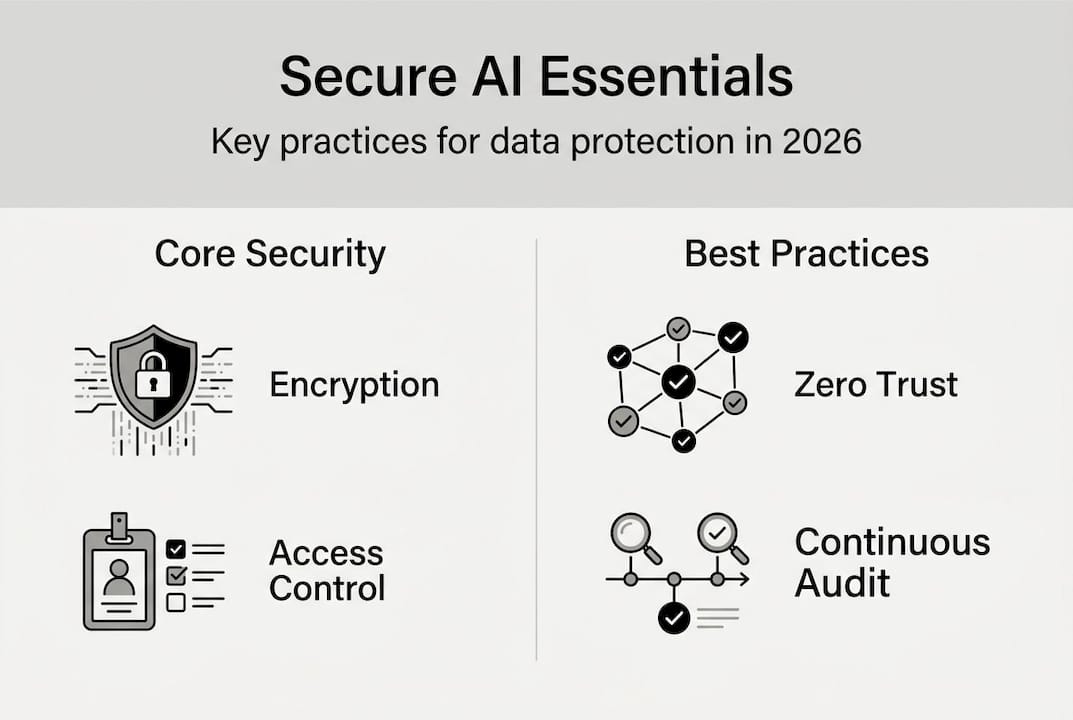

The core protective mechanisms that separate genuinely secure platforms from marketing claims include:

- End-to-end encryption: Data must be encrypted in transit and at rest, with key management that prevents even the platform provider from accessing raw inputs

- Workload isolation: Each organization's AI workloads run in isolated environments, preventing cross-tenant data leakage

- Identity and access management: Granular role-based controls ensure only authorized users can access specific models, data, or outputs

- Confidential computing: Hardware-level protections ensure AI processes data securely, even shielding it from cloud infrastructure providers

- Continuous monitoring and audit logging: Every model interaction is logged, enabling compliance reporting and anomaly detection

Confidential computing and Zero Trust security are now recommended as baseline requirements for enterprises scaling AI systems, not optional enhancements. Zero Trust means no user, device, or process is trusted by default, even inside the network perimeter.
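To make the "not trusted by default" idea concrete, here is a minimal sketch of a default-deny, role-based access check. The role names, resource names, and the `is_allowed` function are hypothetical and exist only for illustration; a real platform would back this with its identity provider and policy engine.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map. Anything not listed here is denied.
ROLE_PERMISSIONS: dict[str, frozenset[tuple[str, str]]] = {
    "analyst": frozenset({("model:summarizer", "invoke")}),
    "ml_engineer": frozenset({
        ("model:summarizer", "invoke"),
        ("model:summarizer", "deploy"),
    }),
}


@dataclass(frozen=True)
class Request:
    user: str
    role: str
    resource: str
    action: str


def is_allowed(req: Request) -> bool:
    """Default deny: allow only an explicitly granted (resource, action) pair."""
    granted = ROLE_PERMISSIONS.get(req.role, frozenset())
    return (req.resource, req.action) in granted


if __name__ == "__main__":
    print(is_allowed(Request("ana", "analyst", "model:summarizer", "invoke")))
    print(is_allowed(Request("ana", "analyst", "model:summarizer", "deploy")))
```

The design choice worth noting is that an unknown role or an unlisted action falls through to a denial rather than to a permissive default.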

Pro Tip: When evaluating AI platforms, ask vendors specifically about their runtime protection capabilities. Many platforms offer strong perimeter security but lack visibility into what happens inside the model inference pipeline, which is where sophisticated attacks now occur.

Here is a quick comparison of security feature maturity levels to help you evaluate vendors:

| Security feature | Basic tier | Enterprise tier |
|---|---|---|
| Data encryption | In transit only | In transit and at rest |
| Access controls | Shared credentials | Role-based, per-user |
| Audit logging | Limited | Full interaction logs |
| Compliance certifications | None | GDPR, SOC 2, ISO 27001 |
| Workload isolation | Shared infrastructure | Dedicated environments |

Understanding data privacy in AI is essential before signing any enterprise AI contract. Teams using AI for document analysis need particular assurance that uploaded files are not retained or used for model training without explicit consent.

The evolving threat landscape: Agentic AI and security challenges

The threat environment is not static. The emergence of agentic AI, systems that can plan, reason, and execute multi-step tasks autonomously, has introduced attack vectors that most security frameworks were not built to handle.

Agentic AI amplifies risks such as prompt injection and data exfiltration, as demonstrated by the rapid compromise of McKinsey's internal AI assistant, Lilli, which was breached in under two hours during a security exercise. That speed is sobering. A human attacker exploiting a traditional system typically needs days or weeks. An AI agent operating at machine speed collapses that timeline dramatically.

The key emerging attack techniques targeting agentic AI include:

- Prompt injection: Malicious instructions embedded in data the AI reads, causing it to take unintended actions

- Privilege escalation: Agents granted broad permissions can be manipulated into accessing resources far beyond their intended scope

- Data exfiltration: Agents with internet access can be tricked into sending sensitive data to external endpoints

- Memory poisoning: Long-term memory features in advanced agents can be corrupted to influence future behavior
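One common mitigation for the data exfiltration technique listed above is an egress allowlist: the agent may only contact hosts that have been approved in advance. The sketch below assumes a hypothetical `ALLOWED_HOSTS` set and an `egress_permitted` helper; the host names are placeholders.

```python
from urllib.parse import urlsplit

# Placeholder hosts for illustration; a real list would come from policy config.
ALLOWED_HOSTS = frozenset({"api.internal.example.com", "docs.example.com"})


def egress_permitted(url: str, allowed_hosts: frozenset[str] = ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests to an exact, pre-approved host name."""
    try:
        parts = urlsplit(url)
        host = parts.hostname  # lowercased, without port or credentials
    except ValueError:
        return False  # malformed URL: deny
    if parts.scheme != "https":
        return False
    return host is not None and host in allowed_hosts


if __name__ == "__main__":
    print(egress_permitted("https://docs.example.com/guide"))
    print(egress_permitted("https://attacker.example.net/collect?d=secret"))
```

Matching on the exact parsed host name, rather than on a substring of the URL, avoids lookalike addresses such as `docs.example.com.attacker.net` or credentials-style tricks like `docs.example.com@evil.net`.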

Two widely discussed approaches to shaping model behavior, and by extension agent safety, are compared below:

| Approach | Constitutional AI | RLHF (Reinforcement Learning from Human Feedback) |
|---|---|---|
| Core mechanism | A written set of principles (a "constitution") guides how the model is trained and constrained | Human-rated feedback shapes model behavior |
| Strength | Predictable, auditable guardrails | Flexible, adapts to nuanced scenarios |
| Weakness | Can be circumvented by novel prompts | Dependent on quality of human feedback data |
| Best use case | High-stakes regulated environments | General-purpose assistants |

Pro Tip: For any agentic AI deployment, apply the principle of least privilege rigorously. Each agent should have access only to the specific tools and data it needs for its defined task, nothing more. This single practice closes off many privilege escalation paths before they can be exploited.
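As a rough illustration of least privilege for agents, the sketch below wraps a tool registry so that each agent can call only the tools it was explicitly granted. `ScopedAgentTools`, `ToolNotPermitted`, and the tool names are hypothetical and not taken from any particular agent framework.

```python
from typing import Callable


class ToolNotPermitted(PermissionError):
    """Raised when an agent requests a tool outside its granted scope."""


class ScopedAgentTools:
    """Expose to one agent only the tools named in its grant."""

    def __init__(self, registry: dict[str, Callable[..., object]], granted: set[str]):
        unknown = granted - set(registry)
        if unknown:
            raise ValueError(f"grant names unknown tools: {sorted(unknown)}")
        # Copy only the granted tools; everything else is unreachable.
        self._tools = {name: registry[name] for name in granted}

    def call(self, name: str, *args, **kwargs):
        if name not in self._tools:
            raise ToolNotPermitted(f"tool '{name}' is not granted to this agent")
        return self._tools[name](*args, **kwargs)


if __name__ == "__main__":
    registry = {
        "search_docs": lambda query: f"results for {query}",
        "send_email": lambda to, body: "sent",
    }
    research_agent = ScopedAgentTools(registry, {"search_docs"})
    print(research_agent.call("search_docs", "q3 revenue"))
```

An agent built this way cannot be talked into sending email by injected instructions, because the email tool was never placed within its reach.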

Staying current on conversational AI trends helps security teams anticipate where agentic capabilities are heading and prepare defenses before new attack techniques become widespread.

Business benefits of prioritizing secure AI adoption

Security investment in AI is often framed as a cost. That framing is wrong. Organizations that build security into their AI strategy from the start consistently see stronger business outcomes than those that bolt it on after incidents occur.

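Secure AI enables enterprises to scale AI productively while defending data and intellectual property and avoiding compliance penalties. Under GDPR, fines can reach €20 million or 4% of global annual turnover, whichever is higher. CCPA penalties are assessed per violation, so they add up quickly when many records are affected.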

The concrete business benefits of prioritizing secure AI adoption include:

- Faster, more confident AI rollout: Teams adopt AI tools more quickly when they trust the platform, reducing the shadow AI problem at the source

- Customer and partner trust: Enterprise clients increasingly require security certifications before sharing data with AI-powered vendors

- Competitive differentiation: Secure-by-design AI is becoming a procurement requirement, not a nice-to-have, in regulated industries like finance, healthcare, and legal services

- Reduced legal exposure: Proactive compliance with GDPR, CCPA, and emerging AI regulations prevents fines and litigation that can dwarf the cost of security investment

- More reliable AI outputs: When data pipelines are clean and protected from tampering, the decisions AI supports are more trustworthy and defensible to stakeholders

- Operational continuity: Secure platforms reduce the risk of AI-related outages or incidents that disrupt core business processes

Organizations evaluating AI model types for productivity should include security architecture as a primary selection criterion alongside capability benchmarks. A highly capable model running on an insecure platform is a liability, not an asset.

Why most organizations misunderstand secure AI—and what to do instead

Here is the uncomfortable reality: most organizations are layering traditional cybersecurity tools onto AI systems and calling it done. Firewalls, endpoint detection, and VPNs were designed for a world where data sat in defined locations and humans initiated every action. AI breaks both of those assumptions.

Agent security requires visibility and runtime protection, which is fundamentally different from perimeter-based thinking. An AI agent operating inside your approved network can still exfiltrate data, manipulate outputs, or escalate privileges without ever triggering a traditional intrusion detection alert.

The mindset shift that actually works is treating security as a first principle in AI design, not a layer added afterward. That means embedding access controls into model deployment pipelines, monitoring inference activity in real time, and treating every agent action as a potential audit event. Security teams need to be involved at the model selection stage, not called in after a breach.
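Treating every agent action as an audit event can be sketched as an append-only log in which each entry includes the hash of the previous one, so later edits become detectable. This is a minimal illustration under assumed names (`AuditLog`, `record`, `verify`), not a production logging system.

```python
import hashlib
import json
import time

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log where each entry is chained to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []
        self._last_hash = GENESIS_HASH

    def record(self, agent_id: str, action: str, detail: dict) -> dict:
        entry = {
            "ts": time.time(),
            "agent_id": agent_id,
            "action": action,
            "detail": detail,  # must be JSON-serializable
            "prev_hash": self._last_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        self._last_hash = entry["hash"]
        return entry

    def verify(self) -> bool:
        """Recompute every hash; return False if any entry was altered."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.record("agent-1", "tool_call", {"tool": "search_docs"})
    print(log.verify())
```

A chain like this only provides tamper evidence if the most recent hash is also stored somewhere the agent and its operators cannot rewrite, such as a separate logging service.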

Security is not a constraint on AI-driven innovation. It is the foundation that makes sustained innovation possible. Organizations that internalize this will move faster and with more confidence than those still treating it as overhead.

Ready to secure your AI journey?

The gap between AI ambition and AI security is where most enterprise risk lives right now. If your organization is scaling AI adoption without a clear security architecture, the question is not whether an incident will occur but when.

Sofia🤖 is built with enterprise-grade security at its core, including GDPR compliance, enterprise encryption, and privacy controls designed for organizations that cannot afford to compromise on data protection. With access to over 60 leading AI models in a single secure platform, your teams get the productivity gains without the security trade-offs. Explore Sofia for secure AI and see how a purpose-built secure AI platform can accelerate your organization's AI strategy safely and confidently.

Frequently asked questions

What makes an AI platform 'secure'?

A secure AI platform uses strong encryption, access controls, and continuous monitoring to prevent data leaks, unauthorized model access, and compliance violations across every layer of the AI stack.

Why are standard cybersecurity tools not enough for AI?

Traditional tools protect network perimeters and endpoints, but model theft and pipeline risks require runtime controls that monitor inference activity and data flows inside the AI system itself.

How can agentic AI increase security risks?

Autonomous AI agents can be manipulated through prompt injection and privilege escalation, allowing attackers to redirect agent actions or extract sensitive data without triggering conventional security alerts.

What are the business benefits of securing AI platforms?

Secure AI platforms allow organizations to scale AI productively while protecting data and intellectual property, building customer trust, and maintaining compliance with data regulations like GDPR and CCPA.