96% of organizations report ROI from privacy investments, yet 64% worry about leakage in generative AI systems. That gap tells you everything about where the industry stands right now. Privacy is no longer a compliance checkbox. It is a strategic function that directly affects trust, liability, and competitive advantage. This guide cuts through the noise to give you clear definitions, a breakdown of the regulatory landscape, proven protection strategies, and the edge cases that catch even experienced teams off guard.

Table of Contents

- Defining data privacy in AI

- Data privacy regulations and frameworks for AI

- Privacy methodologies and protection strategies in AI

- Edge cases, risks, and the reality of AI privacy challenges

- Best practices for maintaining privacy in AI systems

- Leverage AI privacy tools for your organization

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI privacy is lifecycle-driven | Managing data privacy in AI requires covering risks from collection to deployment and inference. |
| Regulations vary globally | EU AI Act and GDPR contrast sharply with US sector-specific rules and NIST voluntary frameworks. |
| Technical strategies are vital | Privacy-by-design, federated learning, and differential privacy are leading techniques for real protection. |
| Edge risks persist | Attacks like model inversion and prompt injection demand layered defenses beyond regulatory compliance. |
| Best practices boost ROI | Proper privacy processes not only safeguard data but deliver measurable returns and compliance assurance. |

Defining data privacy in AI

Data privacy in AI is not simply about keeping files secure. It covers the entire lifecycle of personal data, from the moment it is collected for training, through processing and model development, all the way to inference and deployment.

Data privacy in AI includes lawful, secure handling of personal data from collection to deployment and inference, requiring organizations to respect individual rights at every stage.

Traditional privacy frameworks were built around databases and static records. AI changes the equation. Models can memorize training data, generate outputs that expose personal details, and make inferences about individuals that were never explicitly shared. That is a fundamentally different threat surface.

The key stakeholders affected span three groups:

- Individuals whose data is used to train or run AI systems

- Enterprises that build, deploy, or procure AI tools

- Regulators who set the rules and enforce accountability

Understanding how AI models work at a technical level helps organizations recognize where privacy risks actually emerge. The lifecycle perspective is what separates mature AI privacy programs from reactive ones.

Data privacy regulations and frameworks for AI

The regulatory landscape for AI privacy is fragmented but converging fast. Professionals need to know which frameworks apply to their operations and what each one actually demands.

Key regulations shaping AI privacy include the EU AI Act, GDPR, CCPA/CPRA, HIPAA, the NIST AI Risk Management Framework, and FTC Section 5 enforcement actions. Each takes a different approach.

| Regulation | Scope | Enforcement style | Core requirement |
|---|---|---|---|
| EU AI Act | EU market, risk-based tiers | Fines up to €35 million or 7% of global annual turnover | Conformity assessments for high-risk AI |
| GDPR/UK GDPR | EU/UK personal data | Supervisory authority fines | Lawfulness, minimization, data subject rights |
| CCPA/CPRA | California residents | AG and CPPA enforcement | Opt-out rights, data deletion, transparency |
| HIPAA | US health data | HHS civil penalties, DOJ criminal prosecution | Safeguards for protected health information |
| NIST AI RMF | US voluntary framework | Guidance-based | Govern, Map, Measure, Manage functions |

The EU AI Act and US approaches differ significantly. The EU uses a prescriptive, risk-tiered model. The US relies more on sector-specific rules and FTC enforcement signals. Neither is inherently better, but operating across both jurisdictions requires deliberate planning.

The NIST AI RMF deserves special attention. Its four functions, Govern, Map, Measure, and Manage, give organizations a structured way to build trustworthy AI without waiting for legislation to catch up. Aligning with best AI practices from the start makes regulatory adaptation far less painful.

The FTC has made clear that deceptive or unfair AI data practices fall squarely within its Section 5 authority, and enforcement actions are accelerating.

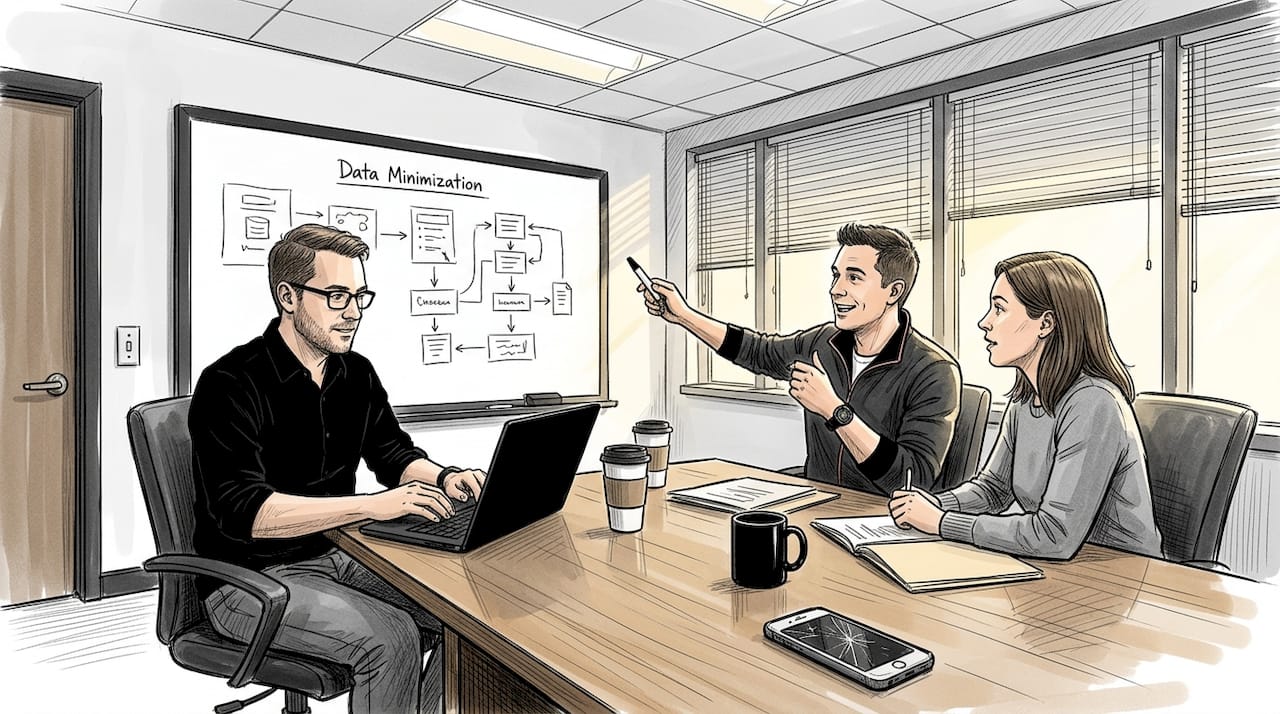

Privacy methodologies and protection strategies in AI

Knowing the regulations is one thing. Implementing the right technical and procedural controls is where organizations actually protect themselves and their users.

Core privacy mechanics for AI include privacy-by-design, data minimization, de-identification, differential privacy, federated learning, encryption, and machine unlearning. Each serves a distinct purpose in the protection stack.

Here is how the main methodologies compare:

| Methodology | Description | Benefits | Trade-offs |
|---|---|---|---|
| Privacy-by-design | Embed controls before development begins | Reduces retrofit costs | Requires upfront planning |
| Differential privacy | Adds calibrated statistical noise to query results, gradients, or outputs | Limits what can be learned about any individual record | Can reduce model accuracy |
| Federated learning | Trains models on local data without centralizing it | Minimizes data exposure | Higher communication and coordination overhead |
| Encryption | Protects data at rest and in transit | Strong baseline protection | Key management complexity |
| Machine unlearning | Removes specific data influence from trained models | Supports right to erasure | Technically immature at scale |

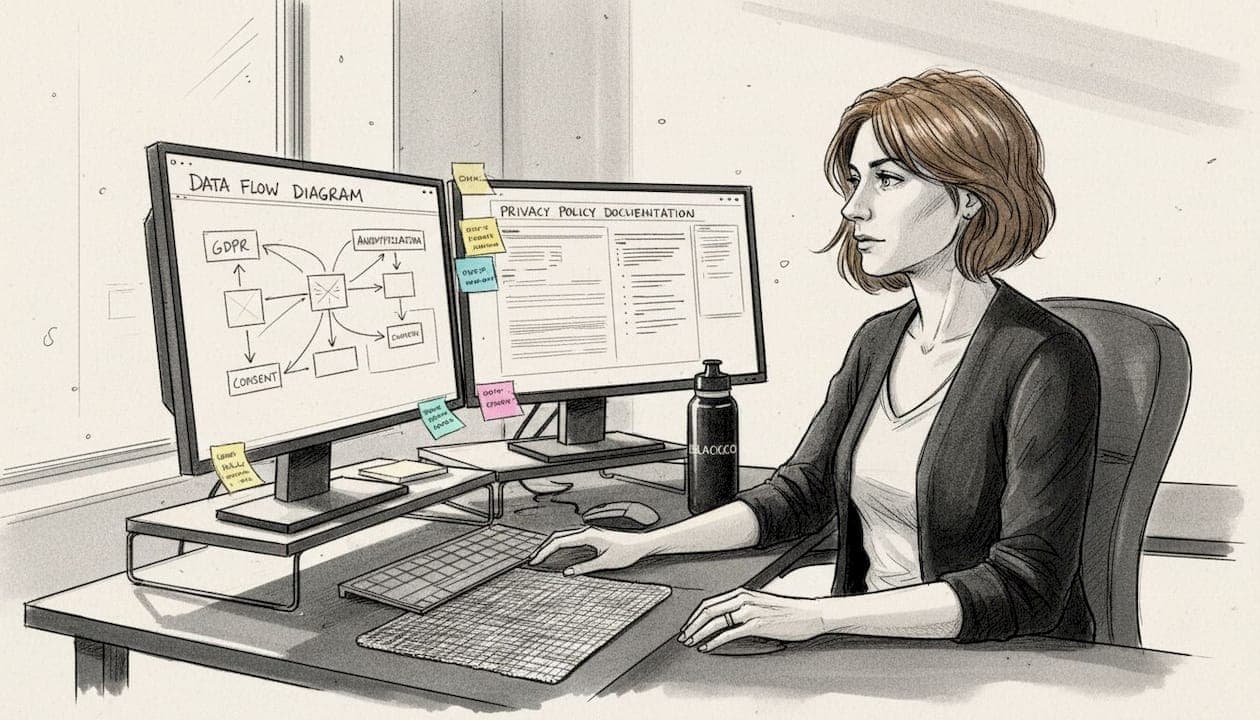

The practical steps for building a privacy-protective AI system follow a clear sequence:

- Conduct a Data Protection Impact Assessment before any training begins

- Apply data minimization to limit what personal data enters the pipeline

- De-identify or pseudonymize training datasets wherever possible

- Implement differential privacy or federated learning based on your risk profile

- Encrypt data at rest and in transit, and protect it during processing where feasible

- Plan for machine unlearning from the architecture stage, not after deployment
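
The minimization and pseudonymization steps above can be sketched in a few lines. This is a minimal illustration, not a complete de-identification pipeline: the field names, the allow-list, and the `prepare_record` helper are all hypothetical, and the secret key is assumed to live in a key management system outside the training environment.

```python
import hashlib
import hmac

# Hypothetical schema for illustration; adapt the field names to your own data.
ALLOWED_FIELDS = {"user_id", "age_band", "country", "ticket_text"}
IDENTIFIER_FIELDS = {"user_id"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 digest (hex string)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_record(record: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list, then pseudonymize direct identifiers."""
    # Data minimization: anything not explicitly allowed never enters the pipeline.
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # Pseudonymization: the same input and key always map to the same token,
    # so records can still be joined without exposing the raw identifier.
    for field in minimized.keys() & IDENTIFIER_FIELDS:
        minimized[field] = pseudonymize(str(minimized[field]), secret_key)
    return minimized
```

A keyed hash is used instead of a plain hash so that someone without the key cannot rebuild the mapping by hashing guessed identifiers. Pseudonymized data generally still counts as personal data under GDPR, so this reduces exposure without removing the data from scope.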

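GDPR Article 25 requires data protection by design and by default, and the EU AI Act adds data governance obligations for high-risk systems. Techniques such as federated learning can support both by limiting how much personal data is centralized, although neither law mandates a specific technique. Retrofitting these controls after a model is trained is expensive and often incomplete.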
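
To make the federated learning idea concrete, here is a toy sketch of federated averaging on a one-parameter linear model. It assumes a simplified setup in which each client holds a small list of `(x, y)` pairs and the server only ever sees model weights. The function names are illustrative and not taken from any framework.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs held locally by one client


def local_update(weight: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient steps for y ~ weight * x on one client's private data."""
    for _ in range(epochs):
        # Gradient of mean squared error with respect to the single weight.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_round(global_weight: float, clients: List[Dataset]) -> float:
    """One averaging round: clients train locally, the server averages the results."""
    total = sum(len(d) for d in clients)
    updates = [(local_update(global_weight, d), len(d)) for d in clients]
    # Only model weights leave each client; raw (x, y) records never do.
    return sum(w * n for w, n in updates) / total


def train(clients: List[Dataset], rounds: int = 50) -> float:
    """Repeat averaging rounds starting from a zero weight."""
    weight = 0.0
    for _ in range(rounds):
        weight = federated_round(weight, clients)
    return weight
```

Weighting each client by its sample count keeps large and small clients in proportion. Shared model updates can still leak information about local data, which is why federated learning is often paired with secure aggregation or differential privacy rather than used alone.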

Pro Tip: Start your privacy risk assessment before you touch training data. Fixing privacy gaps after deployment is typically far more expensive than addressing them at the design stage, because it can mean retraining the model.

For teams using AI document analysis tools, applying input redaction and access controls at the document ingestion layer is a critical first line of defense. The same logic applies to any AI for business workflow that processes sensitive records.

Edge cases, risks, and the reality of AI privacy challenges

Even well-designed systems face attack vectors and edge cases that standard controls do not fully address. Understanding these is not optional for professionals managing high-stakes AI deployments.

Key AI privacy risks include model inversion attacks, membership inference attacks, prompt injection, cross-jurisdiction compliance conflicts, machine unlearning failures, and de-identification failures. Each one can undermine a privacy program that looks solid on paper.

Here is what each threat actually means in practice:

- Model inversion attacks: An adversary queries a model repeatedly to reconstruct sensitive training data, such as medical images or financial records

- Membership inference attacks: An attacker determines whether a specific individual's data was used in training, which itself can be a privacy violation

- Prompt injection: Malicious inputs manipulate an LLM into revealing system prompts, user data, or confidential context

- De-identification failures: Supposedly anonymized data gets re-identified when combined with external datasets, a risk that grows as public data sources multiply

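- Cross-jurisdiction conflicts: A model trained under GDPR rules may still violate CCPA or HIPAA when deployed in a different context

- Machine unlearning failures: Deleting a record from the training set does not remove its influence from a model that has already been trained, so an erasure request may be only partly honored without retraining or a dedicated unlearning method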
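
One way to get a first read on re-identification risk is a k-anonymity check over quasi-identifiers, the attributes that can be linked to external datasets. The sketch below assumes rows are plain dictionaries and that you have already decided which columns count as quasi-identifiers. The column names used with it are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def equivalence_classes(rows: List[Dict[str, str]], quasi_identifiers: Sequence[str]) -> Counter:
    """Count how many rows share each combination of quasi-identifier values."""
    return Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)


def k_anonymity(rows: List[Dict[str, str]], quasi_identifiers: Sequence[str]) -> int:
    """Return the size of the smallest group, i.e. the k for which the data is k-anonymous."""
    classes = equivalence_classes(rows, quasi_identifiers)
    return min(classes.values()) if classes else 0


def risky_groups(rows: List[Dict[str, str]], quasi_identifiers: Sequence[str], k: int) -> List[Tuple[str, ...]]:
    """List quasi-identifier combinations shared by fewer than k rows."""
    classes = equivalence_classes(rows, quasi_identifiers)
    return sorted(combo for combo, count in classes.items() if count < k)
```

A result of 1 means at least one person is unique on those attributes and could be singled out by a join against another dataset. A high k is not a guarantee on its own, since it says nothing about how uniform the sensitive values are within each group.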

The privacy-utility trade-off is real. Adding differential privacy noise improves protection but can degrade model performance on edge cases. Federated learning reduces data centralization but increases infrastructure complexity. There is no free lunch, and pretending otherwise leads to underprotected systems.
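
The trade-off can be seen directly in the Laplace mechanism, one of the basic building blocks of differential privacy. The sketch below is a toy illustration on made-up ages, using only the standard library. A production system would rely on a vetted differential privacy library, since naive floating-point noise sampling has known weaknesses.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    A counting query has sensitivity 1: adding or removing one person changes
    the true count by at most 1, so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mean_abs_error(epsilon: float, trials: int = 2000, seed: int = 0) -> float:
    """Average absolute error of the private count at a given epsilon."""
    rng = random.Random(seed)
    ages = [23, 35, 41, 52, 67, 29, 48, 61]  # toy data, not real records
    true_count = sum(1 for a in ages if a >= 40)
    errors = [
        abs(private_count(ages, lambda a: a >= 40, epsilon, rng) - true_count)
        for _ in range(trials)
    ]
    return sum(errors) / trials
```

Comparing `mean_abs_error(0.1)` with `mean_abs_error(1.0)` shows the cost of stronger privacy: the expected error grows in proportion to `1 / epsilon`, so a tenfold tighter privacy budget gives roughly tenfold noisier answers.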

For teams managing document review workflows, human-in-the-loop oversight is especially important when AI processes legally sensitive or personally identifiable content.

Pro Tip: Layer your defenses. Differential privacy, federated learning, and encryption each address different attack surfaces, and combining them closes gaps that any single method leaves open.

Best practices for maintaining privacy in AI systems

Vulnerabilities are real, but they are manageable with the right protocols in place. The organizations that get this right treat privacy as an ongoing operational discipline, not a one-time project.

A practical compliance checklist for AI privacy programs includes these sequential steps:

- Inventory and classify all personal data used in AI training and inference pipelines

- Conduct DPIAs for every high-risk AI application before deployment

- Implement access controls using least-privilege principles across all AI systems

- Establish consent mechanisms that are specific, informed, and revocable

- Test for privacy vulnerabilities including adversarial inputs and re-identification risks

- Support data subject rights with automated workflows for access, deletion, and correction requests

- Apply human oversight on any AI system making consequential decisions about individuals
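
The least-privilege item in the checklist comes down to a deny-by-default rule: a role can touch a class of data only if it has been explicitly granted. The sketch below illustrates that rule with hypothetical roles and data classes. A real deployment would back it with the organization's identity and access management system rather than an in-code table.

```python
from dataclasses import dataclass

# Hypothetical roles and data classes, for illustration only.
ROLE_GRANTS = {
    "ml_engineer": frozenset({"pseudonymized", "synthetic"}),
    "privacy_officer": frozenset({"raw_personal", "pseudonymized", "synthetic"}),
    "analyst": frozenset({"synthetic"}),
}


@dataclass(frozen=True)
class AccessDecision:
    allowed: bool
    reason: str  # kept so every decision can be written to an audit log


def check_access(role: str, data_class: str) -> AccessDecision:
    """Deny by default; allow only data classes explicitly granted to the role."""
    granted = ROLE_GRANTS.get(role, frozenset())  # unknown roles get no grants
    if data_class in granted:
        return AccessDecision(True, f"{role} is granted {data_class}")
    return AccessDecision(False, f"{role} has no grant for {data_class}")
```

Returning a reason alongside the decision makes each check easy to log, which helps when an auditor later asks who could reach raw personal data and why.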

For LLM-specific deployments, additional controls matter:

- Use prompt filters to block sensitive data from entering model context windows

- Apply input redaction to strip personally identifiable information before processing

- Log and audit all model interactions for anomalous data access patterns

- Use synthetic data for testing and fine-tuning wherever real personal data is not strictly necessary
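
The prompt filter and input redaction controls above can start as a simple pre-processing step in front of the model. The sketch below uses a few illustrative regular expressions. The patterns and the `redact` helper are assumptions for demonstration, and real PII detection needs broader, locale-aware coverage than regexes alone provide.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; they cover a narrow set of US-style formats.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with typed placeholders and return per-type match counts."""
    counts: Dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


prompt = "Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789."
safe_prompt, found = redact(prompt)
print(safe_prompt)  # Contact [EMAIL] or [PHONE], SSN [US_SSN].
print(found)        # {'EMAIL': 1, 'US_SSN': 1, 'PHONE': 1}
```

The counts can be written to the audit log in place of the raw prompt, so the log shows that sensitive data was intercepted without becoming a second copy of it.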

Best practices from the ICO's AI audit framework reinforce inventory, DPIAs, consent, access controls, rights support, synthetic data use, and human oversight as the foundation of any credible AI privacy program.

The numbers back up the investment. 86% of people support dedicated privacy laws, and 96% of organizations report positive ROI from their privacy programs. Yet persistent gaps remain, particularly around generative AI and cross-border data flows.

Continual monitoring is non-negotiable. Privacy risks evolve as models are updated, new data sources are added, and threat actors develop more sophisticated techniques. Real-time monitoring of model inputs and outputs helps teams catch anomalies before they become incidents. Similarly, AI-assisted content workflows benefit from privacy checks embedded at every stage of the content pipeline.

Leverage AI privacy tools for your organization

Understanding the principles is the first step. Putting them into practice at scale requires tools built with privacy as a core feature, not an afterthought.

Sofia🤖 is built with GDPR compliance, enterprise encryption, and privacy controls embedded from the ground up. Whether your team is analyzing sensitive documents, running LLM workflows, or collaborating across departments, the platform gives you access to over 60 state-of-the-art AI models without compromising on data protection. For organizations navigating complex regulatory environments, having a trusted AI platform that aligns with best AI privacy practices removes a significant operational burden. Explore how Sofia🤖 can support your compliance goals while keeping your teams productive and your data secure.

Frequently asked questions

What's the main difference between AI data privacy and traditional data privacy?

AI systems introduce unique risks from collection through inference, including model memorization and adversarial attacks, that traditional privacy frameworks were never designed to address. AI data privacy requires lifecycle-wide controls and model-specific mitigation strategies.

Which regulation is most important for high-risk AI systems?

The EU AI Act is the most critical framework because it bans unacceptable-risk AI and mandates strict conformity assessments, transparency, and human oversight for high-risk applications like biometrics and automated hiring decisions.

How can organizations prevent sensitive data leakage in AI?

Federated learning and prompt filters are among the most effective technical controls, combined with privacy-by-design architecture and continuous monitoring to catch leakage before it escalates.

What are model inversion and membership inference attacks?

Model inversion and membership inference are adversarial techniques where attackers either reconstruct training data from model outputs or determine whether a specific individual's data was included in training, both of which constitute serious privacy violations.

Do privacy investments really pay off for organizations?

Yes. 96% of organizations reported positive ROI from their privacy programs in Cisco's 2025 benchmark study, making privacy investment one of the most consistently rewarded areas of enterprise risk management.